As gamers or tech enthusiasts, we may be tempted to upgrade our systems with each new generation. While it’s nice to enjoy maximum performance all the time, there’s a hefty price to pay. So, what happens if you wait a couple of years or more before a refresh? I waited on the sidelines for half a decade before making wholesale changes to my rig, and with GPU prices belatedly coming down, I finally decided to bite the bullet. Here’s the when, what and why of my own personal upgrade story.

In the beginning

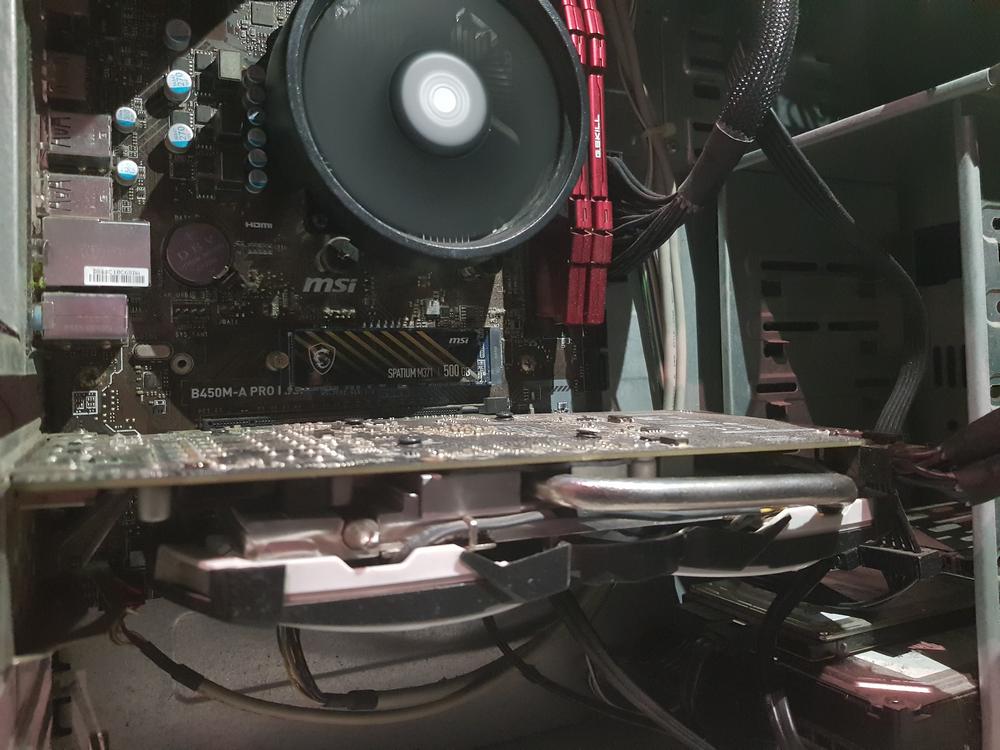

After four years of loyal service – notice I tend not to upgrade all that often – my Core i5-6600K and MSI RX 570 Armor OC were due for a much-needed refresh. I started with the very foundation, opting for an Asus TUF Gaming X670E-Plus WiFi motherboard, a Ryzen 5 7600X processor (the non-X version, sadly, wasn’t yet released), plus the cheapest supported RAM at the time, 32GB of G.Skill DDR5-6000 CL36 Trident Z5 Neo RGB.

With the building blocks in place, I was left waiting patiently for prices to drop on current RX 7000 or RTX 4000 series cards. It seemed the day would never come, but if you’ve been keeping a close eye on Club386 deals, you’ll know that component prices are all heading in the right direction. For me, the trigger point was Sapphire’s RX 7900 XT Pulse dropping to $720 with an $80 Amazon coupon. It was a perfect opportunity to see how much performance this particular upgrade could deliver.

A meaty upgrade, and one I hope will eliminate a lot of the complications that come with running older gear. The Polaris (RX 500) generation dates back to April 2017, and I bought my card in February 2019, so there was only one way to keep up with the rising demands of new games: overclocking. But not only that, undervolting was also necessary to avoid paying an RX 570’s worth in electricity!

After several weeks of tweaking, I settled on 1,380MHz core and 2,100MHz memory clocks, netting around 10 per cent more performance depending on the game. At stock, the card ran at 1,268MHz (1.15V) core and 1,750MHz memory. It was a welcome uplift, but these settings gradually developed a tendency to stutter, most likely as a result of thermal paste degradation. Eventually I could no longer hit those figures and dropped back to a stable 1,350MHz (1.08V) core and 2,080MHz (0.85V) memory, with core temps climbing to 82°C on a hot day.
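For context, here is a quick Python sketch of how those clock changes translate into percentages, using only the stock and overclocked figures above. It measures clock speed alone; the roughly 10 per cent in-game gain is a separate, measured figure, since games rarely scale one-to-one with clocks.

```python
def percent_gain(stock: float, tuned: float) -> float:
    """Percentage increase from a stock value to a tuned value."""
    return (tuned / stock - 1) * 100

# RX 570 clocks quoted above, in MHz: (stock, overclocked)
core_stock, core_oc = 1268, 1380
mem_stock, mem_oc = 1750, 2100

print(f"Core clock:   +{percent_gain(core_stock, core_oc):.1f}%")  # +8.8%
print(f"Memory clock: +{percent_gain(mem_stock, mem_oc):.1f}%")    # +20.0%
```

The core gained under 9 per cent while memory gained 20 per cent, which plausibly explains why the real-world uplift hovered around 10 per cent and varied from game to game.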

Thankfully I went with an 8GB model back in the day, which gave me decent scope to tinker with higher texture settings and resolutions on my QHD 144Hz monitor, but something with a little more oomph was clearly needed to fully enjoy 2560×1440 gameplay. That isn’t to say the RX 570 belongs on the scrap heap, mind. On the contrary, the card is currently enjoying a second life in another PC, hopefully for years to come, so good job AMD and MSI on this one.

Before and after

Focussing specifically on gains in the graphics department, I kept all game and Windows settings unchanged between the two cards. Generally speaking, I used near-max graphics settings, while the motherboard, CPU and memory stayed the same for comparison purposes: TUF Gaming X670E-Plus, Ryzen 5 7600X in Eco mode, and 32GB of DDR5-6000. Before you question Eco mode, note that it is hot as hell here in Morocco, so the lower temps really do matter.

Do be aware that my RX 570 scores may be a smidge higher than others you encounter online, as I lowered the tessellation level a bit in the driver to extract a couple of extra frames from this ageing GPU. Yes, I really do like to tinker.

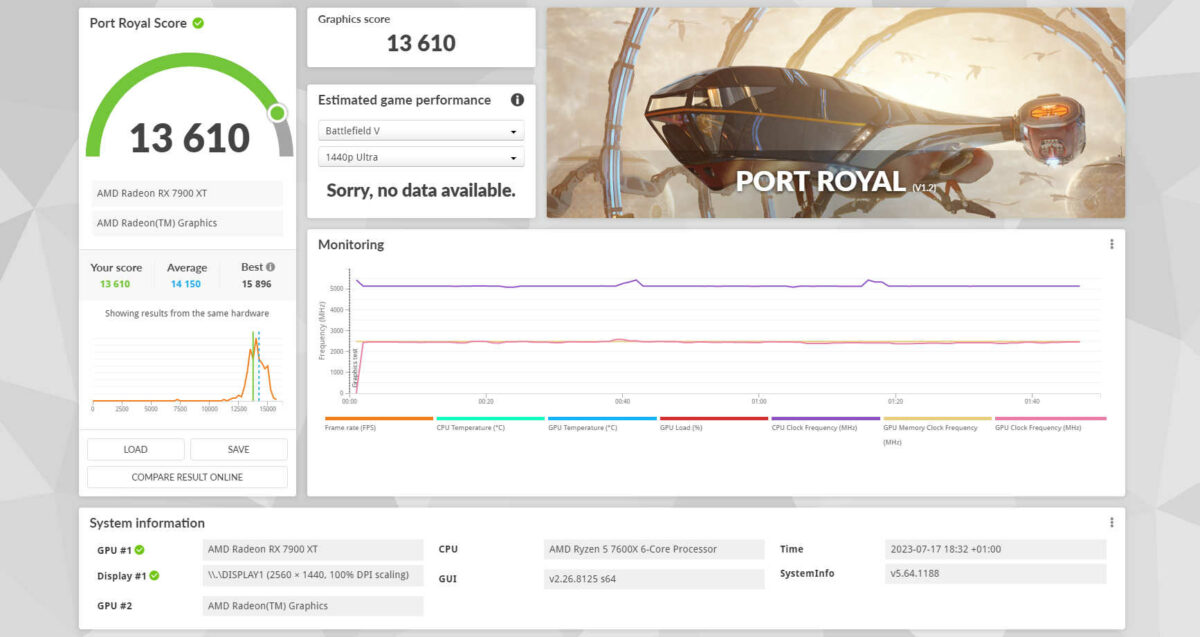

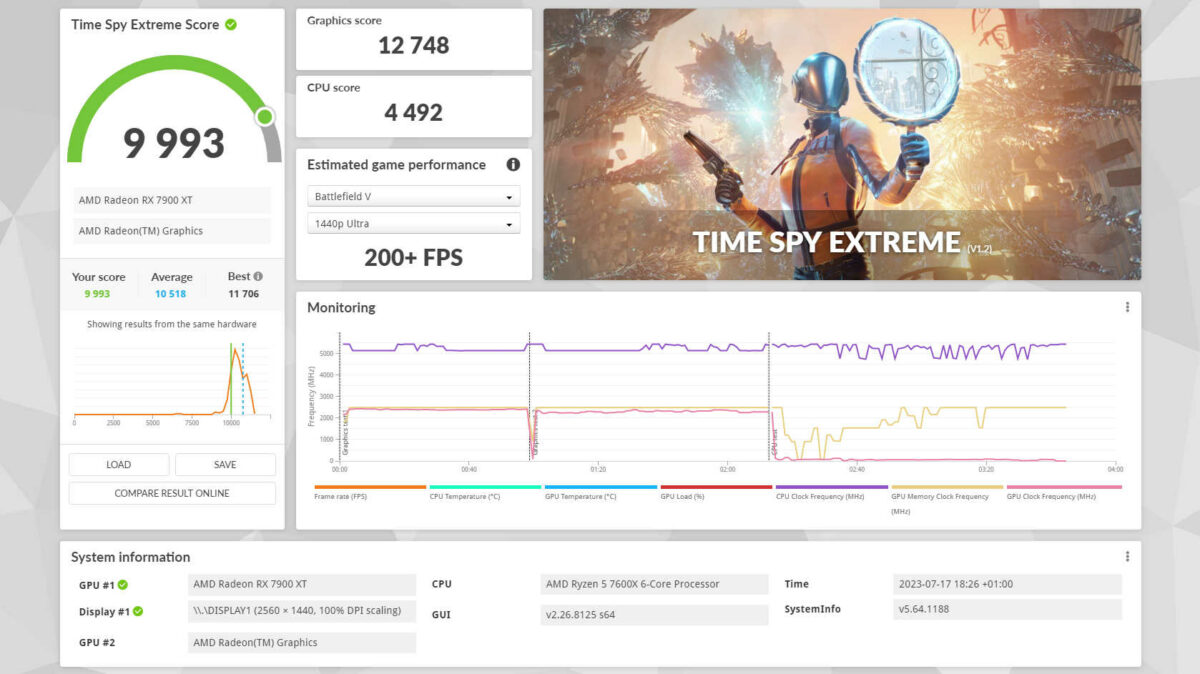

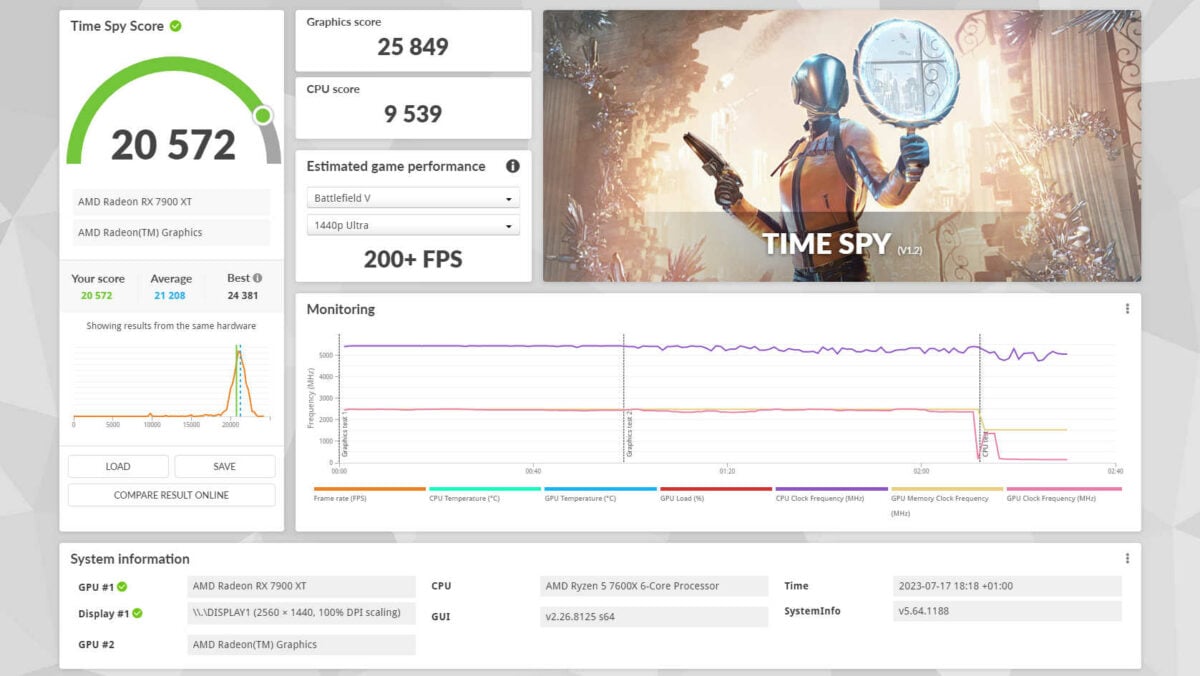

With all of that aside, let’s talk numbers, starting with the mandatory 3DMark benchmark. In Time Spy, the RX 7900 XT delivered a GPU score of 25,849 against 4,126 for the RX 570, while Time Spy Extreme produced 12,748 and 1,902 points respectively. More than a 5x uplift, hell yeah!
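The uplift figure is simply the ratio of the two scores minus one. A minimal Python sketch using the 3DMark GPU scores above:

```python
def score_ratio(old: int, new: int) -> float:
    """How many times higher the new GPU score is than the old one."""
    return new / old

# 3DMark GPU scores quoted above: test -> (RX 570, RX 7900 XT)
scores = {
    "Time Spy": (4126, 25849),
    "Time Spy Extreme": (1902, 12748),
}

for test, (old, new) in scores.items():
    ratio = score_ratio(old, new)
    print(f"{test}: {ratio:.2f}x the score, a {(ratio - 1) * 100:.0f}% uplift")
```

Both results land a little above six times the RX 570’s score, which corresponds to an uplift of more than 500 per cent.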

Moving on to the games I’m currently playing, in World of Tanks the RX 7900 XT delivered an average of 322.4 frames per second against 77.9 on the RX 570. Cyberpunk 2077 skyrocketed from 25.5 to 139.5fps, while Destiny 2 climbed from 79.6 to a welcome 186.4fps. The table below covers a range of titles, with an average performance uplift of 285 per cent.

| Game | RX 570 (FPS) | RX 570 System Power (W) | RX 7900 XT (FPS) | RX 7900 XT System Power (W) | FPS Uplift (%) |

|---|---|---|---|---|---|

| Anno 1800 | 21.5 | 313 | 99.5 | 465 | 362 |

| Cyberpunk 2077 | 25.5 | 320 | 139.5 | 474 | 447 |

| Destiny 2 | 79.6 | 328 | 186.4 | 400 | 134 |

| Enlisted | 72.3 | 315 | 315.2 | 470 | 335 |

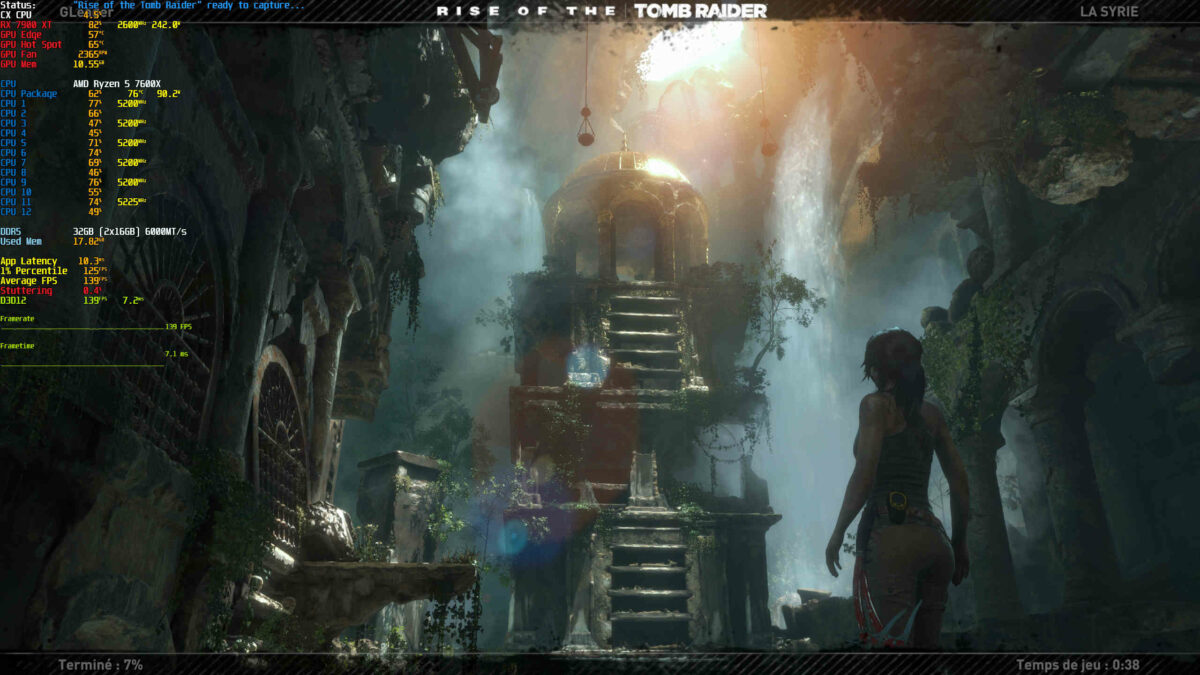

| Rise of the Tomb Raider | 52.4 | 324 | 212.4 | 477 | 305 |

| Spider-man Remastered | 43.3 | 326 | 144.4 | 430 | 233 |

| War Thunder | 90.0 | 314 | 300.5 | 466 | 233 |

| World of Tanks | 77.9 | 318 | 322.4 | 477 | 313 |

| World War Z | 82.0 | 330 | 248.0 | 402 | 202 |
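The uplift column and the 285 per cent average can be reproduced from the FPS columns. This Python sketch computes each game’s uplift as (new / old - 1) × 100, then takes a plain mean across the nine titles; that averaging method is an assumption, as the exact calculation isn’t stated.

```python
# Average FPS from the table above: game -> (RX 570, RX 7900 XT)
fps = {
    "Anno 1800": (21.5, 99.5),
    "Cyberpunk 2077": (25.5, 139.5),
    "Destiny 2": (79.6, 186.4),
    "Enlisted": (72.3, 315.2),
    "Rise of the Tomb Raider": (52.4, 212.4),
    "Spider-man Remastered": (43.3, 144.4),
    "War Thunder": (90.0, 300.5),
    "World of Tanks": (77.9, 322.4),
    "World War Z": (82.0, 248.0),
}

def uplift_pct(old: float, new: float) -> float:
    """Percentage FPS increase of the new card over the old one."""
    return (new / old - 1) * 100

uplifts = {game: uplift_pct(old, new) for game, (old, new) in fps.items()}
for game, pct in uplifts.items():
    print(f"{game:<24} +{pct:6.1f}%")

# Plain (unweighted) mean: every title counts equally.
mean = sum(uplifts.values()) / len(uplifts)
print(f"Mean uplift: {mean:.0f}%")
```

A plain mean weights every title equally, so a game already running at 300fps doesn’t drown out a heavier one like Anno 1800. It also shows that the table’s uplift column truncates rather than rounds (Anno 1800 works out to about 362.8 per cent), which doesn’t change the 285 per cent average.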

In-game framerate is but one part of the equation. Noise and heat output can be equally important, and in use the RX 570 was a turbojet, its fans constantly at full throttle just to hold the GPU at 82°C. The RX 7900 XT, on the other hand, stays reasonably cool and quiet even on summer days above 30°C, with readings of 74°C edge and 86°C hot spot. Things got even better after undervolting and capping fps on this beast – more on that later – with some games not requiring the fans to spin up at all. My only complaint now is the 100 extra watts of heat dumped into my room, but them’s the breaks.

An improvement of up to 447 per cent in recent games is insane, transforming a slide show into smooth QHD gameplay, and that’s without taking hardware ray tracing into account. The older card simply doesn’t support it, so titles like Metro Exodus Enhanced Edition or Minecraft RTX won’t even launch.

Max-out games

While it’s important to know how much performance an upgrade brings, the point is also to max out graphics settings and framerates. Holding off on games until I had a system able to run them properly meant avoiding badly optimised launches, as waiting just a couple of months often brings more stability. Wait a few years and you also get to buy said games plus all relevant DLC at discounted prices. Good things come to those who wait, as they say.

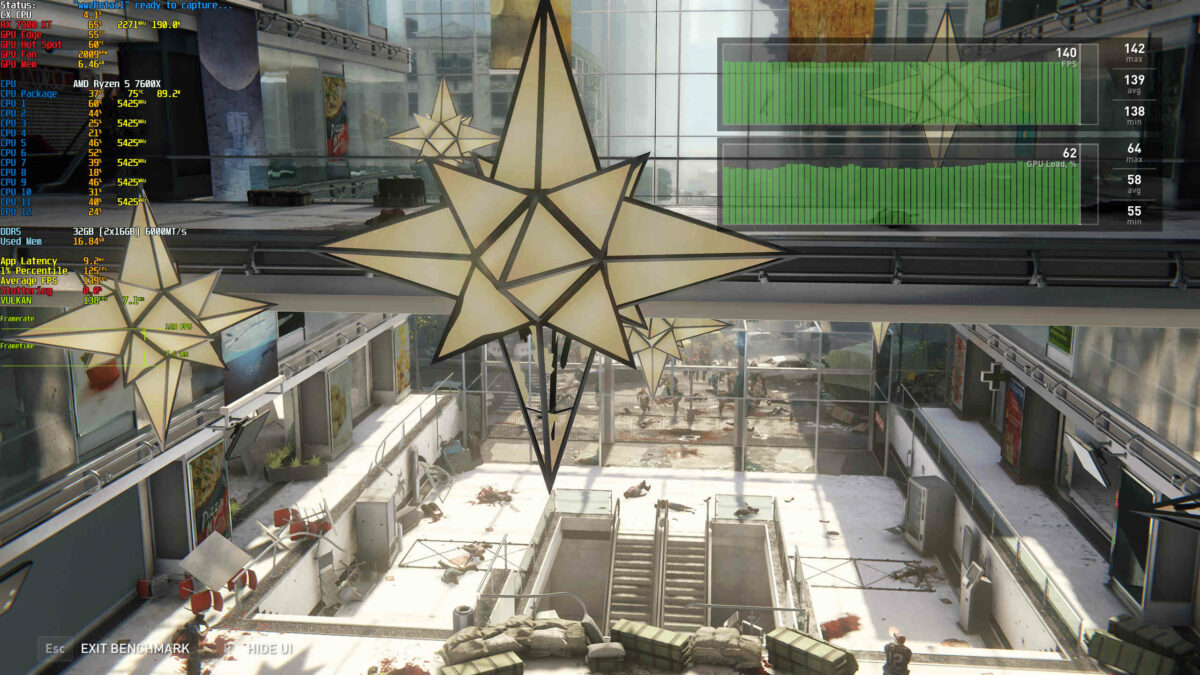

Thanks to the power of the AMD RX 7900 XT, nearly all tested games ran well beyond 144 frames per second at the native 2560×1440 resolution, more than my 144Hz display can show, wasting power and causing unnecessarily high temps. For this reason, I chose to cap the framerate at 140fps using AMD’s Adrenalin software. The result is a stable 140fps in most games with all settings maxed out, while leaving headroom for unexpected demand spikes caused by explosions or other visual effects.

QHD at 144Hz is how I’m currently enjoying these titles, and how I’ll keep enjoying them until I upgrade my display. A 240Hz OLED is on the wish list, but once again, I’ll be waiting a while for prices to come down! Give it another four years…

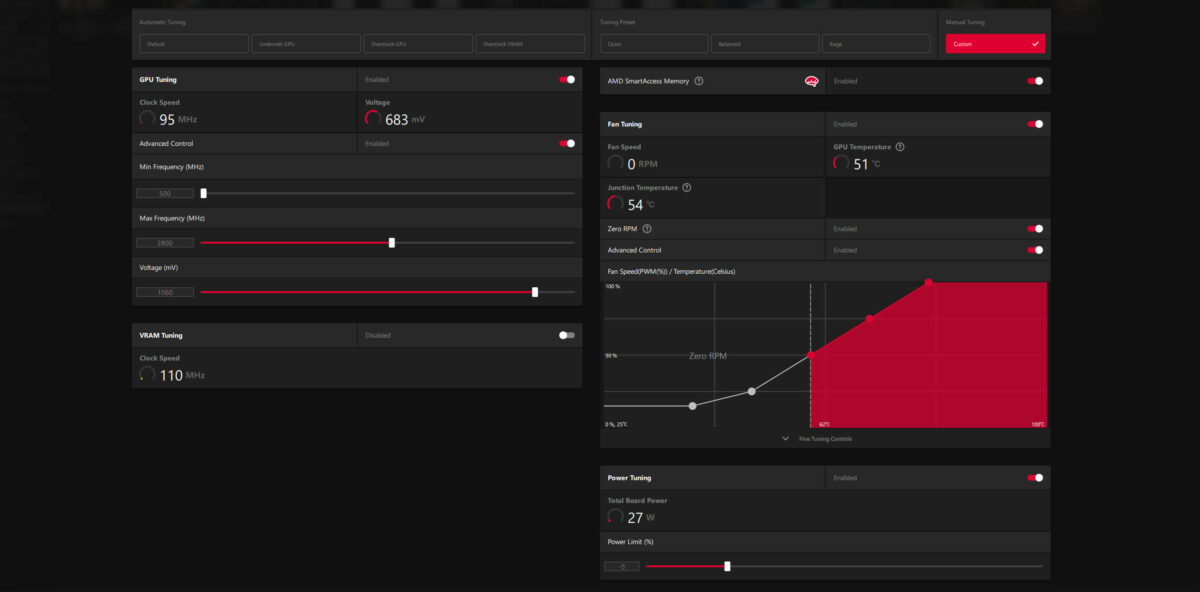

Undervolting and capping fps

Having hundreds of frames per second is nice and all, but if it means a hefty electricity bill at the end of the month, I’d rather not, especially as my display only goes up to 144Hz. This made the choice pretty simple: lock frames at 140fps and lower the GPU core voltage a bit. Why not 144? Because in my experience you ought to leave a little headroom for fps spikes, to avoid screen tearing.
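To put that 4fps margin into perspective, frame time is simply 1,000ms divided by the frame rate. A quick Python sketch comparing the 140fps cap against the 144Hz refresh interval:

```python
def frame_time_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps

refresh_hz = 144  # monitor refresh rate
cap_fps = 140     # frame cap set in the driver

refresh_ms = frame_time_ms(refresh_hz)
cap_ms = frame_time_ms(cap_fps)

print(f"144Hz refresh interval: {refresh_ms:.2f} ms")            # 6.94 ms
print(f"140fps frame time:      {cap_ms:.2f} ms")                # 7.14 ms
print(f"Headroom per frame:     {cap_ms - refresh_ms:.2f} ms")   # 0.20 ms
```

Each capped frame therefore takes about 0.2ms longer than one refresh cycle, a small buffer against short spikes pushing output past what the panel can display. The 140fps figure is the rule of thumb described above; the code only converts it into milliseconds.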

To do so, I limited the maximum core frequency to 2,800MHz at 1.06V, with the power limit set to -5 per cent. A -10 per cent setting worked for most games, though it caused instability in Enlisted. For now, I’ve left the memory untouched, as GDDR6 is quite efficient anyway, and a bad memory underclock can cause a heap of problems, from lower performance to system crashes.

The frame cap and undervolt combo brought system power consumption in War Thunder down from 466W to as low as 300W, while delivering a completely stable 140fps. For reference, the RX 570 only managed 90fps while gobbling 314W of total system power. The 166W reduction alone is enough to offset the consumption of my Xbox Series X console, a perfect example of architectural improvements.
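One rough way to quantify that architectural gain is frames per watt. This Python sketch uses the War Thunder figures above; note that it divides by total system power (CPU, motherboard, fans and all), so it’s a whole-system metric rather than a pure GPU efficiency figure.

```python
def fps_per_watt(fps: float, system_watts: float) -> float:
    """Frames per second delivered per watt of total system power."""
    return fps / system_watts

# War Thunder figures quoted above: (average FPS, total system power in W)
rx570 = fps_per_watt(90.0, 314)
rx7900xt_tuned = fps_per_watt(140.0, 300)  # 140fps cap + undervolt

print(f"RX 570:             {rx570:.3f} fps/W")
print(f"RX 7900 XT (tuned): {rx7900xt_tuned:.3f} fps/W")
print(f"Efficiency ratio:   {rx7900xt_tuned / rx570:.2f}x")
```

On these numbers the capped, undervolted RX 7900 XT delivers roughly 1.6 times the frames per watt of the RX 570. Because fixed platform draw sits in the denominator, treat this as an indicative comparison rather than a precise efficiency measurement.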

However, when games demand more oomph, the GPU works harder to draw the necessary frames, pushing consumption to 320W in World War Z, 370W in Enlisted and 400W in Rise of the Tomb Raider. Even so, these are still well below the uncapped stock settings, which hovered around 470W in most titles.

| Game | RX 7900 XT Stock System Power (W) | RX 7900 XT Undervolt + 140fps Cap System Power (W) | Power Reduction (%) |
|---|---|---|---|
| Destiny 2 | 400 | 375 | 6 |
| Enlisted | 470 | 370 | 21 |
| Rise of the Tomb Raider | 477 | 400 | 16 |
| War Thunder | 466 | 300 | 36 |
| World of Tanks | 477 | 300 | 37 |
| World War Z | 402 | 320 | 20 |
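The Power Reduction column is the drop relative to stock draw, (stock - tuned) / stock. A short Python sketch that reproduces it from the table:

```python
# System power from the table above: game -> (stock W, undervolted + capped W)
power = {
    "Destiny 2": (400, 375),
    "Enlisted": (470, 370),
    "Rise of the Tomb Raider": (477, 400),
    "War Thunder": (466, 300),
    "World of Tanks": (477, 300),
    "World War Z": (402, 320),
}

def reduction_pct(stock: float, tuned: float) -> float:
    """Percentage drop in power draw relative to stock."""
    return (stock - tuned) / stock * 100

for game, (stock, tuned) in power.items():
    print(f"{game:<24} {stock - tuned:>4} W saved ({reduction_pct(stock, tuned):.0f}%)")

# Game with the largest relative saving.
best = max(power, key=lambda g: reduction_pct(*power[g]))
print(f"Largest saving: {best}")
```

Rounded to whole numbers, the output matches the table, with World of Tanks showing the biggest relative drop.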

A saving of up to 37 per cent in power consumption is significant, and even mundane tasks show improvement: with a paused YouTube video in Firefox, I recorded 100W for the RX 7900 XT system against 110W for the RX 570.

My theory regarding the RX 570 build’s higher idle power consumption is that the older Radeon doesn’t support hardware VP9 decoding, a format YouTube commonly serves. And since I tend to always have a (paused) YouTube tab open, the CPU has to stand ready to decode that video in software. The same happens in VLC with some file formats. A note to anyone owning a GPU that can’t decode VP9: you can force YouTube to serve H.264 streams using a browser add-on, which should help lower CPU load.

Also note that my rig would use even less power were it not for its eight 120mm fans. That sounds like a lot, but when ambient temps are routinely above 30°C, I’d rather have better cooling for these expensive parts!

Final thoughts

So, was the upgrade worth it? Yes, without doubt. I love not having to dial down graphics settings or wonder whether I can run a game at all, be it due to insufficient memory or unsupported technology. And though GPU prices may soon become even more attractive – I’d have settled for a hypothetical RX 7800 XT with 16GB of memory for $500 – the timing felt right for a serious uptick in gaming potential.

It’s funny what can trigger such an outlay – I mainly upgraded because my older card started to overheat too often, a sign that a repaste is long overdue – but having made the move, I anticipate my Radeon RX 7900 XT will last me a good four years, if not more. At the very least, upgrading from a Radeon RX 570 to an RX 7900 XT has allowed my QHD 144Hz monitor to stretch its legs.

Sapphire RX 7900 XT Pulse

Built on the groundbreaking AMD RDNA 3 architecture with chiplet technology, AMD Radeon RX 7900 XT graphics deliver next-generation performance, visuals, and efficiency at 4K and beyond.