Intel has just showcased its Xeon 6+ processors at the Mobile World Congress in Barcelona, with core counts of up to 288 E-cores. Codenamed Clearwater Forest and targeted at network and cloud infrastructure, the new CPUs feature a multi-chiplet design combining 12 compute tiles, resulting in a highly dense core arrangement.

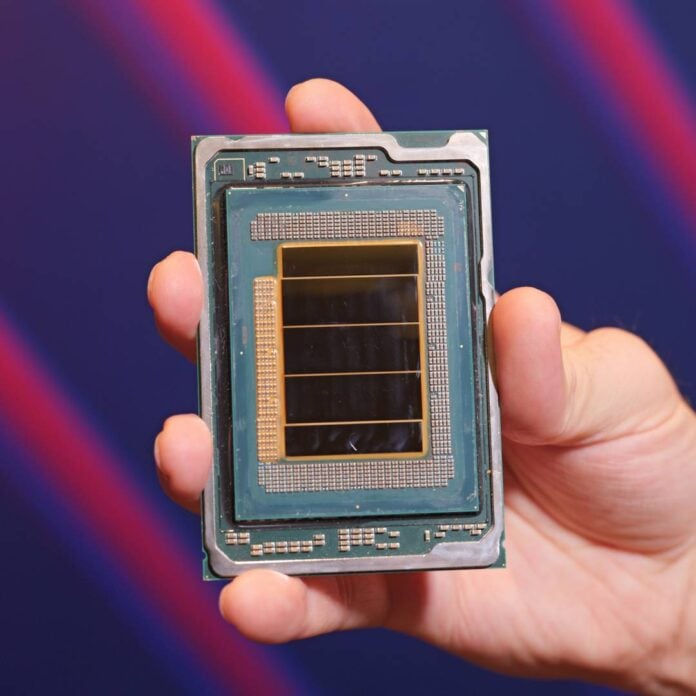

The company is positioning Xeon 6+ in its AI-ready networking portfolio, targeting on-site AI inference and edge deployments as networks transition toward 6G. To prepare it for the demands of upcoming communication standards, Intel has used its high-bandwidth 2.5D EMIB links and Foveros Direct 3D die-stacking technology to combine multiple tiles in one package.

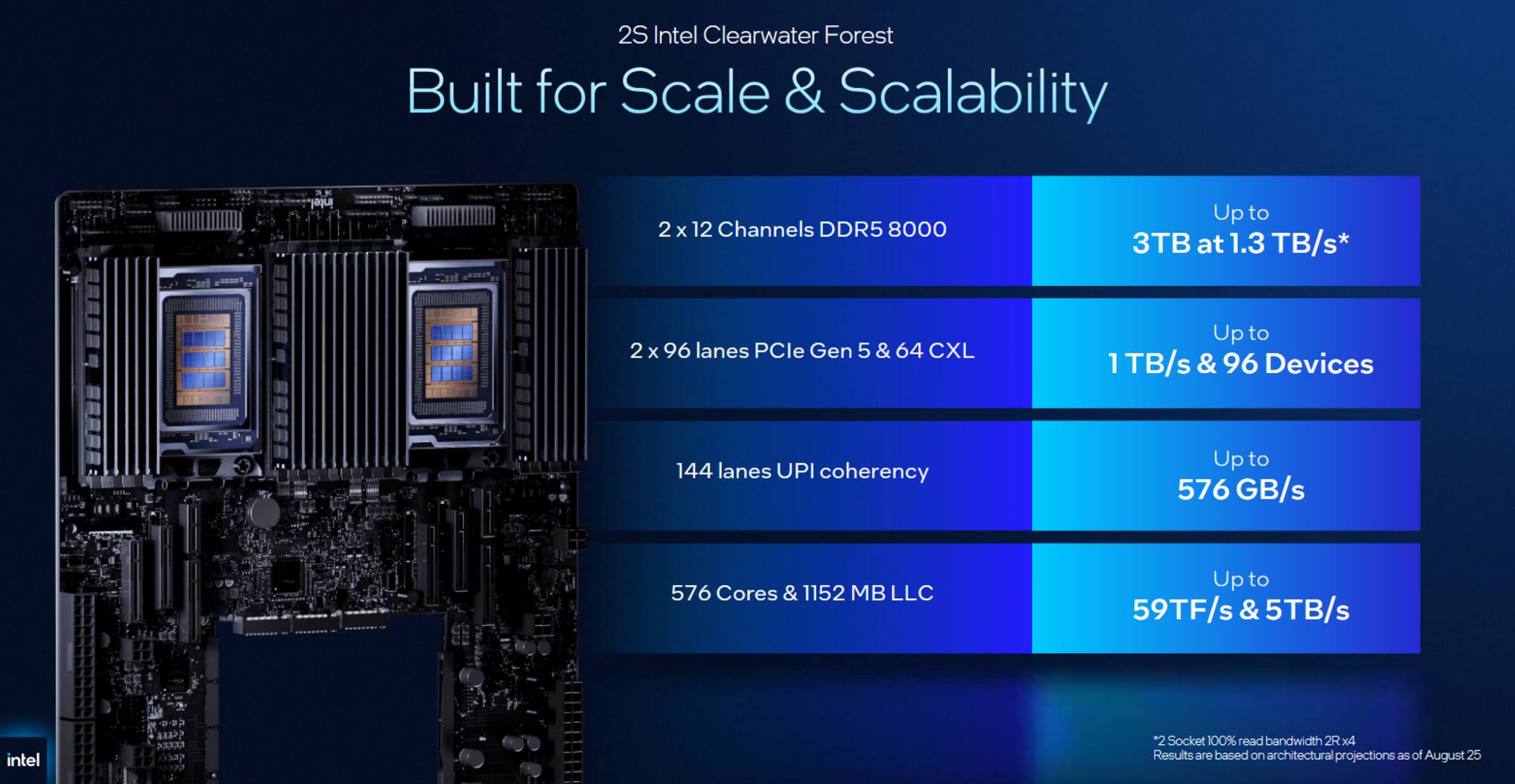

Together, these technologies enable Intel to combine 12 compute tiles made on its 18A node with three active base tiles made on its 7nm-class (Intel 3) process and two I/O tiles manufactured on its 10nm-class (Intel 7) process. Each compute tile breaks down further into six modules, each containing four Darkmont E-cores, resulting in 24 cores per compute tile, 288 cores per CPU, or 576 cores on dual-socket platforms.
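The core-count arithmetic above can be checked with a short sketch. The variable names are illustrative only; the figures are the ones quoted in this article.

```python
# Topology figures as quoted in the article (names are illustrative).
COMPUTE_TILES = 12      # 18A compute tiles per package
MODULES_PER_TILE = 6    # modules per compute tile
CORES_PER_MODULE = 4    # Darkmont E-cores per module
SOCKETS = 2             # dual-socket platform

cores_per_tile = MODULES_PER_TILE * CORES_PER_MODULE
cores_per_cpu = COMPUTE_TILES * cores_per_tile
cores_per_platform = SOCKETS * cores_per_cpu

print(cores_per_tile, cores_per_cpu, cores_per_platform)  # 24 288 576
```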

Each of these cores gets a 64KB instruction cache, a wider front end than before, and a larger 416-entry out-of-order window for improved parallelism. Each quad-core module shares 4MB of L2 cache, complemented by 576MB of last-level cache across the CPU. Execution resources have also been increased to improve parallel integer and vector throughput.
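As a rough illustration of what those cache figures imply for a full 288-core part, the sketch below derives the aggregate L2 and the average last-level cache per core. These derived totals are simple arithmetic on the numbers quoted here, not figures Intel has stated, and they assume every module and the full cache are enabled.

```python
# Cache figures quoted in the article; derived values are back-of-envelope only.
CORES = 288
CORES_PER_MODULE = 4
L2_PER_MODULE_MB = 4
LLC_TOTAL_MB = 576

modules = CORES // CORES_PER_MODULE           # quad-core groups sharing an L2
l2_total_mb = modules * L2_PER_MODULE_MB      # aggregate L2 across the CPU
llc_per_core_mb = LLC_TOTAL_MB / CORES        # average LLC share per core

print(modules, l2_total_mb, llc_per_core_mb)  # 72 288 2.0
```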

Moving to the I/O tiles, we have two chiplets, each packing eight accelerators, 48 PCIe Gen 5 lanes, 32 CXL 2.0 lanes, and 96 UPI 2.0 lanes (UPI being the brand’s point-to-point interconnect for linking up Xeon CPUs). In other words, a Clearwater Forest CPU offers 16 accelerators, comprising four Intel QuickAssist Technology engines, four Intel Dynamic Load Balancers, four Intel Data Streaming Accelerators, and four Intel In-Memory Analytics Accelerators, alongside 96 PCIe Gen 5 lanes, 64 CXL 2.0 lanes, and 192 UPI lanes. For memory, Intel advertises support for 12 memory channels and up to DDR5-8000 modules. Accelerators aside, these I/O tiles seem identical to those used in Intel’s Granite Rapids chips.
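The per-CPU I/O totals follow directly from doubling the per-tile figures. A minimal sketch, using hypothetical dictionary keys and only the numbers quoted above:

```python
# Per-I/O-tile resources as quoted in the article (key names are illustrative).
IO_TILES = 2
PER_TILE = {
    "accelerators": 8,
    "pcie_gen5_lanes": 48,
    "cxl_2_0_lanes": 32,
    "upi_lanes": 96,
}

# Scale every per-tile figure by the number of I/O tiles in the package.
per_cpu = {name: count * IO_TILES for name, count in PER_TILE.items()}

# Accelerator breakdown per CPU: four each of QAT, DLB, DSA, and IAA.
ACCELERATORS = {"QAT": 4, "DLB": 4, "DSA": 4, "IAA": 4}
accelerator_total = sum(ACCELERATORS.values())

print(per_cpu)
print(accelerator_total == per_cpu["accelerators"])
```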

Compared to last-gen Xeon 6700E CPUs, the new Xeon 6+ processors provide double the core count, with Intel claiming a 17% increase in instructions per clock (IPC) per core, 5x more last-level cache, and 20% faster memory, with dual-socket implementations allowing up to 3TB of DDR5 memory. Regarding power consumption, Intel lists a TDP range of 300-500W for Xeon 6+.

Intel plans to release its Xeon 6+ processors in the first half of 2026, offering network and data centre operators a platform capable of handling hundreds of VMs per socket.