And then there was one. In an unprecedented climbdown, Nvidia tore up its RTX 40 Series line-up by cancelling the poorly named GeForce RTX 4080 12GB, leaving a 16GB model to stand alongside the all-conquering GeForce RTX 4090.

A bold move, and though the correct decision was made, reputational damage has been done. The hive mind of the Internet doesn’t forget, and for all of Ada Lovelace’s might, ensuing conversation has revolved around cancelled SKUs and iffy power connectors.

Nvidia GeForce RTX 4080 Founders Edition

£1,269 / $1,199

Pros

- Beats every last-gen card

- DLSS 3 is impressive

- Best-in-class efficiency

- Incredibly cool and quiet

- Smashes 2K120 or 4K60

Cons

- $1,199 x80 Series GPU

- Shuns small-form-factor


A shame, really, as the two GPUs headlining the latest generation are seriously quick. RTX 4090 is the best graphics card around and plays in a league of its own – rival AMD has already waved the white flag, conceding defeat at the ultra-high-end – and if our time with RTX 4080 16GB has taught us anything, it’s that the Ada Lovelace architecture happens to scale pretty darn well.

Arriving at the same $1,199 price point as RTX 3080 Ti, the hulking RTX 4080 offers genuine attraction to enthusiast gamers who’ve watched from the sidelines through years of chip shortages and crypto-fuelled scalping. Outside of sizeable performance gains, we’re informed that stock levels bode well for users seeking a holiday-season upgrade, and interestingly, Nvidia has opted to use the exact same cooler as RTX 4090.

Yes, it’s laughably large, resulting in memorable memes, but when you attach a vast cooler designed for a 450W GPU to one that sips 320W, the end result is the coolest, quietest and most composed high-end reference design we’ve ever tested. You’re itching to see the results, we know, but first things first, a recap on what makes RTX 40 Series tick.

A Chip Off The Old Block

Those of you craving benchmark numbers can skip right ahead – they’re worth the wait – but for the geeks among us, let’s begin with all that’s happening beneath RTX 40 Series’ hood.

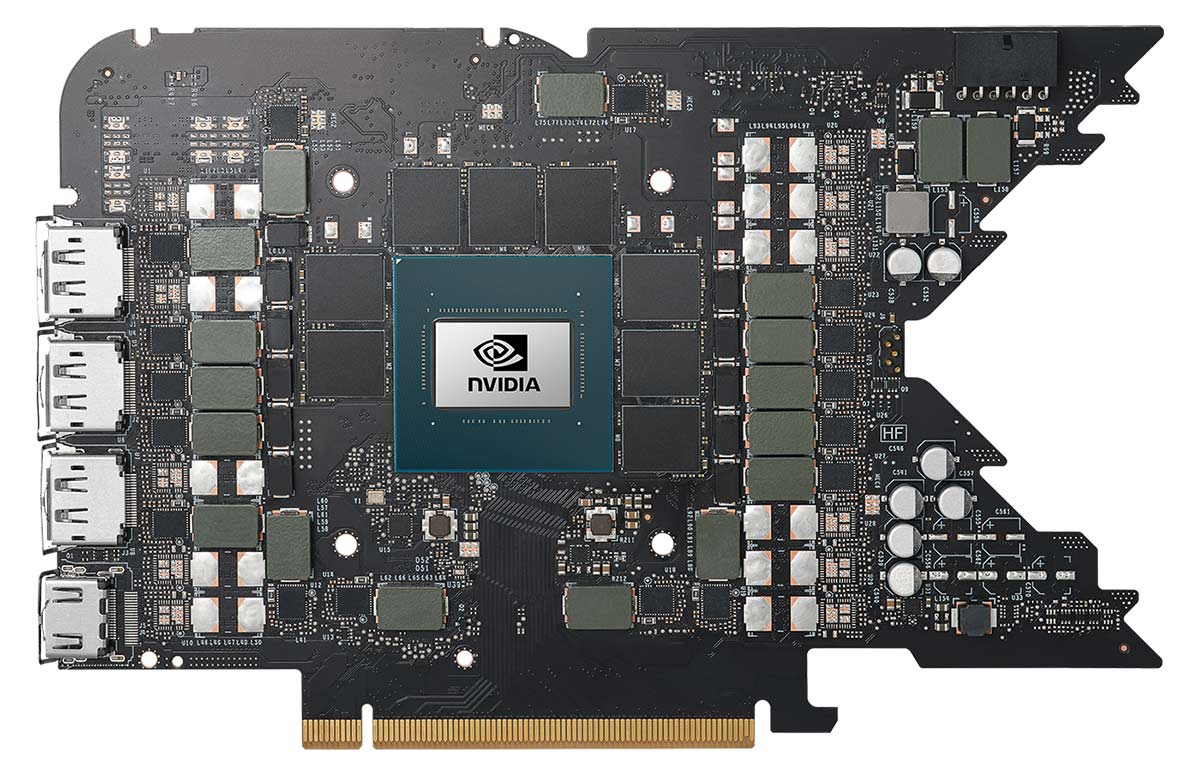

What you’re looking at is one of the most complex consumer GPUs to date. Nvidia’s 608mm2 die, fabricated on a TSMC 4N process, packs a scarcely believable 76.3 billion transistors. Putting that figure into perspective, the best GeForce chip of the previous 8nm generation, GA102, measures 628mm2 yet accommodates a puny 28.3 billion transistors. Nvidia has effectively jumped two nodes in one fell swoop, as Samsung’s 8nm process is more akin to 10nm from other foundry manufacturers.

We’ve gone from flyweight to heavyweight in the space of a generation, and a 170 per cent increase in transistor count naturally bodes well for specs. A full-fat die is home to 12 graphics processing clusters (GPCs), each sporting a dozen streaming multiprocessors (SMs), six texture processing clusters (TPCs) and 16 render output units (ROPs). Getting into the nitty-gritty of the block diagram, each SM carries 128 CUDA cores, four Tensor cores, one RT core and four texture units.
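
The full-die totals fall straight out of the block diagram. A quick sketch of the arithmetic, using only the per-GPC and per-SM counts quoted above (the constant and function names are ours, purely for illustration):

```python
# Full AD102 configuration, built from the per-unit counts quoted in the text.
GPCS = 12                 # graphics processing clusters on a full die
SMS_PER_GPC = 12          # streaming multiprocessors per GPC
CUDA_PER_SM = 128
TENSOR_PER_SM = 4
RT_PER_SM = 1
TEXTURE_UNITS_PER_SM = 4


def full_die_totals() -> dict:
    """Multiply the per-SM resources up to a fully enabled die."""
    sms = GPCS * SMS_PER_GPC
    return {
        "sms": sms,
        "cuda_cores": sms * CUDA_PER_SM,
        "tensor_cores": sms * TENSOR_PER_SM,
        "rt_cores": sms * RT_PER_SM,
        "texture_units": sms * TEXTURE_UNITS_PER_SM,
    }


totals = full_die_totals()
for unit, count in totals.items():
    print(f"{unit}: {count:,}")
```

Swapping in the number of enabled SMs for a shipping card (rather than the full 144) gives the per-card figures in the same way.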

All told, Ada presents a staggering 18,432 CUDA cores in truest form, representing a greater than 70 per cent hike over last-gen champion, RTX 3090 Ti. Plenty of promise, yet to the frustration of performance purists, Nvidia chooses not to unleash the full might of Ada in the first wave. The initial duo of GPUs shapes up as follows:

| GeForce | RTX 4090 | RTX 4080 | RTX 3090 Ti | RTX 3080 Ti | RTX 3080 12GB |
|---|---|---|---|---|---|
| Launch date | Oct 2022 | Nov 2022 | Mar 2022 | Jun 2021 | Jan 2022 |
| Codename | AD102 | AD103 | GA102 | GA102 | GA102 |
| Architecture | Ada Lovelace | Ada Lovelace | Ampere | Ampere | Ampere |
| Process (nm) | 4 | 4 | 8 | 8 | 8 |
| Transistors (bn) | 76.3 | 45.9 | 28.3 | 28.3 | 28.3 |
| Die size (mm2) | 608.5 | 378.6 | 628.4 | 628.4 | 628.4 |
| SMs | 128 of 144 | 76 of 80 | 84 of 84 | 80 of 84 | 70 of 84 |
| CUDA cores | 16,384 | 9,728 | 10,752 | 10,240 | 8,960 |
| Boost clock (MHz) | 2,520 | 2,505 | 1,860 | 1,665 | 1,710 |
| Peak FP32 TFLOPS | 82.6 | 48.7 | 40.0 | 34.1 | 30.6 |
| RT cores | 128 | 76 | 84 | 80 | 70 |
| RT TFLOPS | 191.0 | 112.7 | 78.1 | 66.6 | 59.9 |
| Tensor cores | 512 | 304 | 336 | 320 | 280 |
| ROPs | 176 | 112 | 112 | 112 | 96 |
| Texture units | 512 | 304 | 336 | 320 | 280 |
| Memory size (GB) | 24 | 16 | 24 | 12 | 12 |
| Memory type | GDDR6X | GDDR6X | GDDR6X | GDDR6X | GDDR6X |
| Memory bus (bits) | 384 | 256 | 384 | 384 | 384 |
| Memory clock (Gbps) | 21 | 22.4 | 21 | 19 | 19 |
| Bandwidth (GB/s) | 1,008 | 717 | 1,008 | 912 | 912 |
| L1 cache (MB) | 16 | 9.5 | 10.5 | 10 | 8.8 |
| L2 cache (MB) | 72 | 64 | 6 | 6 | 6 |
| Power (watts) | 450 | 320 | 450 | 350 | 350 |
| Launch MSRP ($) | 1,599 | 1,199 | 1,999 | 1,199 | 799 |
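
Two rows in the table are derived rather than independent. As a rough sketch, peak FP32 throughput is CUDA cores multiplied by two operations per clock (a fused multiply-add counts as two) and by the boost clock, while memory bandwidth is bus width in bytes multiplied by the per-pin data rate. The helper names below are ours, and every input is lifted from the table:

```python
def peak_fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak FP32 rate, assuming one fused multiply-add (2 FLOPs) per core per clock."""
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12


def bandwidth_gbs(bus_bits: int, gbps_per_pin: float) -> float:
    """Memory bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_bits / 8 * gbps_per_pin


# (CUDA cores, boost clock in MHz, bus width in bits, memory clock in Gbps)
cards = {
    "RTX 4090": (16384, 2520, 384, 21.0),
    "RTX 4080": (9728, 2505, 256, 22.4),
    "RTX 3090 Ti": (10752, 1860, 384, 21.0),
}

for name, (cores, mhz, bus, rate) in cards.items():
    tflops = peak_fp32_tflops(cores, mhz)
    bandwidth = bandwidth_gbs(bus, rate)
    print(f"{name}: {tflops:.1f} TFLOPS, {bandwidth:.0f} GB/s")
```

The same two functions reproduce the remaining columns when fed the RTX 3080 Ti and RTX 3080 12GB figures.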

Leaving scope for a fabled RTX 4090 Ti, inaugural RTX 4090 disables 16 SMs, a little more than a single GPC’s worth, enabling 128 of 144 possible SMs. Resulting figures of 16,384 CUDA cores, 128 RT cores and 512 Tensor cores remain mighty by comparison, and frequency headroom on the 4nm process is hugely impressive, with Nvidia specifying a 2.5GHz boost clock. The madness of Nvidia’s flagship is reflected in peak teraflops, which more than doubles from 40 on RTX 3090 Ti to an incredible 82.6 on RTX 4090.

Front-end RTX 40 Series specifications are eye-opening, but the back end is noticeably less revolutionary, where a familiar 24GB of GDDR6X memory operates at 21Gbps, providing 1,008GB/s of bandwidth. The needle hasn’t moved – Nvidia has opted against Micron’s quicker 24Gbps chips – however the load on memory has softened with a significant bump in on-chip cache.

RTX 4090 carries 16MB of L1 and 72MB of L2. We’ve previously seen AMD attach as much as 128MB Infinity Cache on Radeon graphics cards, and though Nvidia doesn’t detail data rates or clock cycles, a greater than 5x increase in cache between generations reduces the need to routinely spool out to memory, raising performance and reducing latency.

Heard rumours of RTX 40 Series requiring a nuclear reactor to function? Such reports were wide of the mark. RTX 4090 maintains the same 450W TGP as RTX 3090 Ti, while RTX 4080 scales down to 320W, almost 10 per cent lower than the last-generation x80 equivalent.

Shifting focus to the RTX 4080 launching today, it is instructive to know that Nvidia is not repurposing the same die across multiple high-end SKUs, as was the case with the previous generation. Instead, RTX 4080 calls upon newfound AD103 silicon but once again falls short of full implementation, featuring 76 of 80 possible active SMs.

Core count is cleaved by over 40 per cent from 16,384 on RTX 4090 to 9,728 on RTX 4080. RT and Tensor cores are slashed proportionally to 76 and 304, respectively, while onboard GDDR6X memory not only drops from 24GB to 16GB, bus width is also narrowed from 384 bits to just 256. Savage cuts on paper, and certain specifications intimate a sideward step alongside existing parts. You’ll have noticed RTX 4080 has fewer CUDA, RT and Tensor cores than RTX 3080 Ti, so what gives?

In addition to a treasure chest of Ada Lovelace architecture optimisations, the 4nm process enables significantly higher clocks – RTX 4080 is binned to operate at effectively the same 2.5GHz as RTX 4090 – and such lofty frequencies ensure peak teraflops best the previous-generation champion, RTX 3090 Ti, to the tune of 22 per cent. Memory clock is also upped from 21Gbps on RTX 4090 to 22.4Gbps on RTX 4080, to help mitigate the tighter bus, and let’s not forget the 64MB of L2 cache, representing a generational increase of over 10x.

Leaving plenty of room to manoeuvre, Nvidia has kept the door ajar for a potential RTX 4080 Ti with a full complement of 80 active SMs, and as for the ill-fated RTX 4080 12GB, well, the less said the better. Word on the grapevine is the third-rung $899 GPU will eventually see the light of day under new guise as RTX 4070 Ti in 2023.

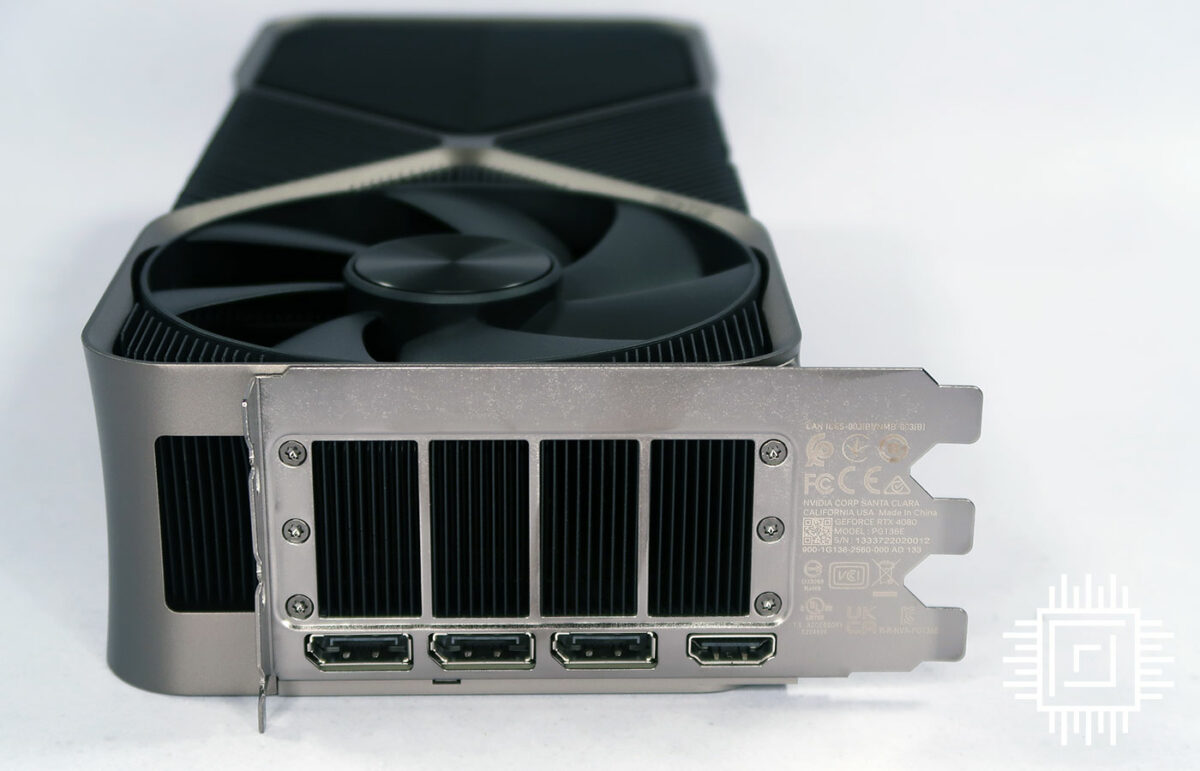

Outside of core specifications, display outputs remain tied to HDMI 2.1 and DisplayPort 1.4a – DisplayPort 2.0 hasn’t made the cut – and PCIe Gen 4 continues as the preferred interface. There’s no urgency to switch to Gen 5, says Nvidia, as even RTX 4090 can’t saturate the older standard. Finally, NVLink is conspicuous by its absence; SLI is nowhere to be seen on any RTX 40 Series product announced thus far, signalling multi-GPU setups are well and truly dead.

Ada Optimisations

While a shift to a smaller node affords more transistor firepower, such a move typically precludes sweeping changes to architecture. Optimisations and resourcefulness are the order of the day, and the huge computational demands of raytracing are such that raw horsepower derived from a near-3x increase in transistor budget isn’t enough; something else is needed, and Ada Lovelace brings a few neat tricks to the table.

Nvidia often refers to raytracing as a relatively new technology, stressing that good ol’ fashioned rasterisation has been through wave after wave of optimisation, and such refinement is actively being engineered for RT and Tensor cores. There’s plenty of opportunity where low-hanging fruit is yet to be picked.

Shader Execution Reordering

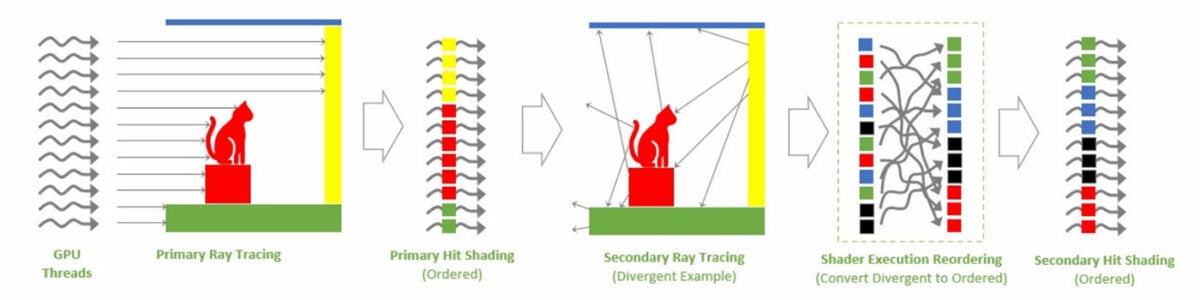

Shaders have been running efficiently for years, whereby one instruction is executed in parallel across multiple threads. You may know it as SIMT.

Raytracing, however, throws a spanner in those smooth works, as while pixels in a rasterised triangle lend themselves to running concurrently, keeping all lanes occupied, secondary rays are divergent by nature and the scattergun approach of hitting different areas of a scene leads to massive inefficiency through idle lanes.

Ada’s fix, dubbed Shader Execution Reordering (SER), is a new stage in the raytracing pipeline tasked with scanning individual rays on the fly and grouping them together. The result, going by Nvidia’s internal numbers, is a 2x improvement in raytracing performance in scenes with high levels of divergence.
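
To see why grouping helps, consider a deliberately simplified model. It is our own toy illustration, not Nvidia’s hardware algorithm: each ray needs one of a handful of hypothetical hit shaders, a warp of 32 lanes must run once for every distinct shader its rays require, and reordering simply sorts rays by shader before they are packed into warps.

```python
import random

WARP_SIZE = 32  # lanes that execute in lockstep (toy assumption)


def shader_passes(shader_ids: list) -> int:
    """Count serialised passes: each warp runs once per distinct shader it holds."""
    passes = 0
    for start in range(0, len(shader_ids), WARP_SIZE):
        warp = shader_ids[start:start + WARP_SIZE]
        passes += len(set(warp))
    return passes


def reorder(shader_ids: list) -> list:
    """Group rays needing the same shader so they land in the same warps."""
    return sorted(shader_ids)


rng = random.Random(386)  # fixed seed so the run is repeatable
rays = [rng.randrange(8) for _ in range(1024)]  # 8 hypothetical hit shaders

divergent = shader_passes(rays)
coherent = shader_passes(reorder(rays))
print(f"passes without reordering: {divergent}")
print(f"passes with reordering:    {coherent}")
```

In this model the reordered workload needs little more than one pass per warp, whereas the scattered workload pays for nearly every shader in every warp. Real hardware has to weigh the cost of the reordering itself, which the sketch ignores.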

Nvidia portentously claims SER is “as big an innovation as out-of-order execution was for CPUs.” A bold statement, and there is a proviso in that Shader Execution Reordering is an extension of Microsoft’s DXR APIs, meaning it is up to developers to implement and optimise SER in games.

There’s no harm in having the tools, mind, and Nvidia is quickly discovering that what works for rasterisation can evidently be made to work for RT.

Upgraded RT Cores

In the rasterisation world, geometry bottlenecks are alleviated through mesh shaders. In a similar vein, displaced micro-meshes aim to echo such improvements in raytracing.

With Ampere, the Bounding Volume Hierarchy (BVH) was forced to contain every single triangle in the scene, ready for the RT core to sample. Ada, in contrast, can evaluate meshes within the RT core, identifying a base triangle prior to tessellation in an effort to drastically reduce storage requirements.

A smaller, compressed BVH has the potential to enable greater detail in raytraced scenes with less impact on memory. Having to insert only the base triangles, BVH build times are improved by an order of magnitude and data sizes shrink significantly, helping reduce CPU overhead.

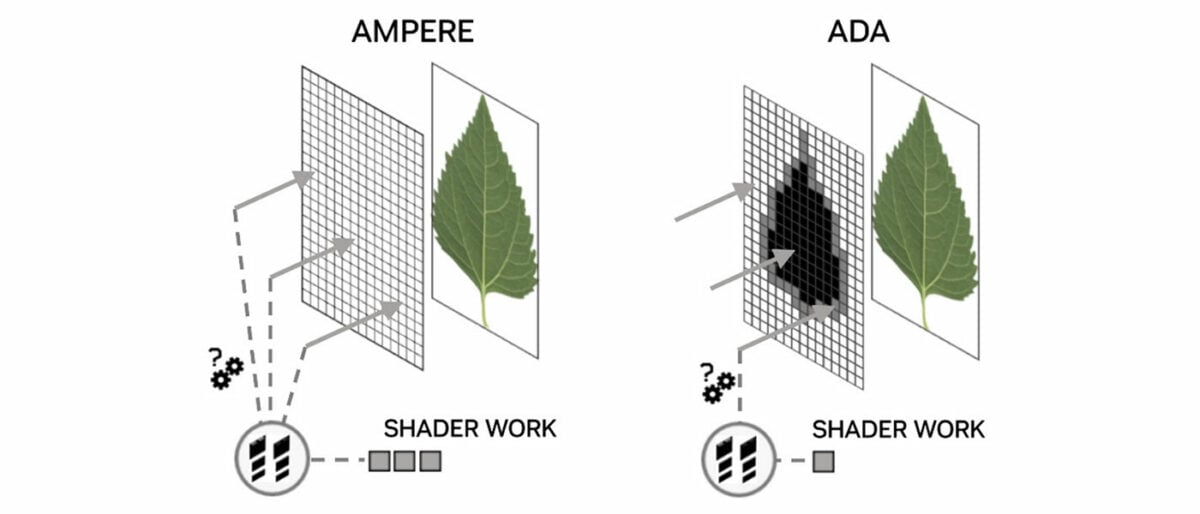

The sheer complexity of raytracing is such that eliminating unnecessary shader work has never been more important. To that end, an Opacity Micromap Engine has also been added to Ada’s RT core to reduce the amount of information going back and forth to shaders.

In the common leaf example, developers place the texture of foliage within a rectangle and use opaque polygons to determine the leaf’s position. A way to construct entire trees efficiently, yet with Ampere the RT core lacked this basic ability, with all rays passed back to the shader to determine which areas are opaque, transparent, or unknown. Ada’s Opacity Micromap Engine can identify all the opaque and transparent polygons without invoking any shader code, resulting in 2x faster alpha traversal performance in certain applications.
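
The saving can be pictured with a small sketch. It assumes a hypothetical three-state classification per micro-triangle, mirroring the opaque, transparent and unknown categories described above, and counts how often a shader would still have to be consulted. The names are ours and the logic is an illustration rather than a description of the actual hardware.

```python
from enum import Enum


class Opacity(Enum):
    OPAQUE = "opaque"
    TRANSPARENT = "transparent"
    UNKNOWN = "unknown"


def resolve_hits(hit_states, any_hit_shader):
    """Return (accepted_hits, shader_calls) for a sequence of micro-triangle states."""
    accepted = 0
    shader_calls = 0
    for state in hit_states:
        if state is Opacity.OPAQUE:
            accepted += 1            # resolved without running shader code
        elif state is Opacity.TRANSPARENT:
            continue                 # ray passes through, no shader needed
        else:
            shader_calls += 1        # only unknown cases fall back to the shader
            if any_hit_shader():
                accepted += 1
    return accepted, shader_calls


# Hypothetical leaf card: 6 opaque, 8 transparent and 2 unknown micro-triangles hit.
hits = [Opacity.OPAQUE] * 6 + [Opacity.TRANSPARENT] * 8 + [Opacity.UNKNOWN] * 2
accepted, shader_calls = resolve_hits(hits, any_hit_shader=lambda: True)
print(f"accepted hits: {accepted}, shader calls: {shader_calls} of {len(hits)}")
```

Without the micromap, every one of the 16 hits in this example would have gone back to the shader.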

These two new hardware units make the third-generation RT core more capable than ever before – TFLOPS per RT core has risen by ~65 per cent between generations – yet all this isn’t enough to back up Nvidia’s claims of Ada Lovelace delivering up to 4x the performance of the previous generation. For that, Team Green continues to rely on AI.

DLSS 3

Since 2019, Deep Learning Super Sampling has played a vital role in GeForce GPU development. Nvidia’s commitment to the tech is best expressed by Bryan Catanzaro, VP of applied deep learning research, who states in no uncertain terms that “the era of brute-force graphics rendering is over.”

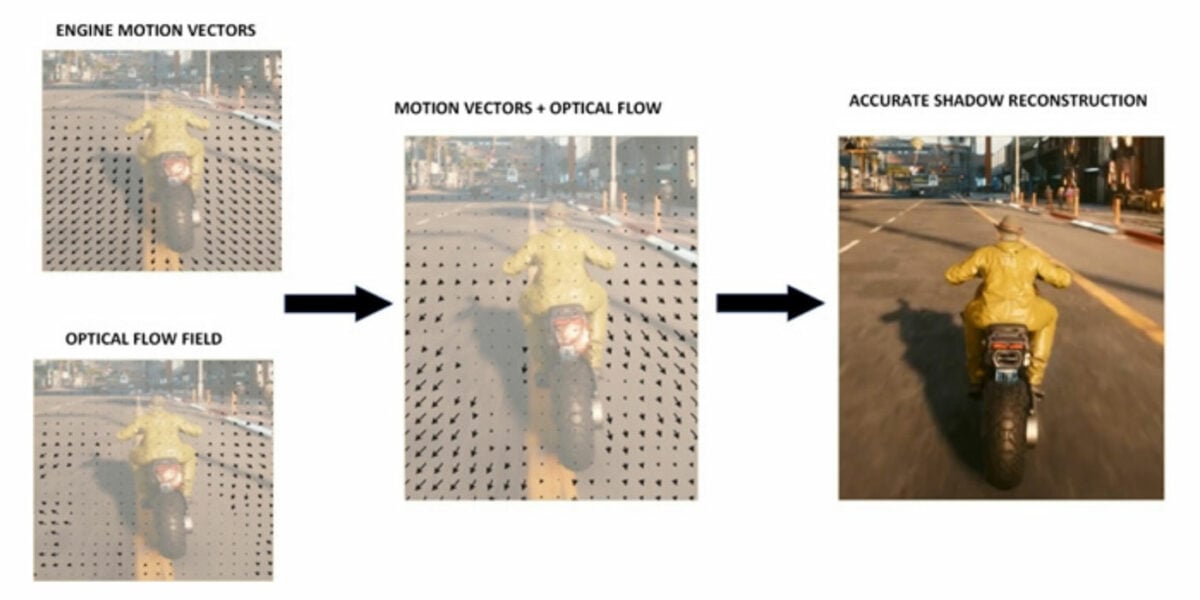

Third-generation DLSS, deemed a “total revolution in neural graphics,” expands upon DLSS Super Resolution’s AI-trained upscaling by employing optical flow estimation to generate entire frames. Through a combination of DLSS Super Resolution and DLSS Frame Generation, Nvidia reckons DLSS 3 can now reconstruct seven-eighths of a game’s total displayed pixels, to dramatically increase performance and smoothness.
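
The seven-eighths figure can be reproduced with simple arithmetic, assuming Super Resolution renders at half the output resolution on each axis (a quarter of the pixels) and Frame Generation synthesises every other frame. Both assumptions are ours; the function name is illustrative.

```python
from fractions import Fraction


def reconstructed_fraction(render_scale_per_axis: Fraction,
                           generated_frame_share: Fraction) -> Fraction:
    """Share of displayed pixels that are not traditionally rendered."""
    rendered_pixels_per_frame = render_scale_per_axis ** 2
    rendered_frame_share = 1 - generated_frame_share
    traditionally_rendered = rendered_pixels_per_frame * rendered_frame_share
    return 1 - traditionally_rendered


share = reconstructed_fraction(Fraction(1, 2), Fraction(1, 2))
print(share)  # 7/8
```

Only one pixel in eight passes through the conventional shader pipeline under those settings; a higher-quality Super Resolution mode would lower the reconstructed share accordingly.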

Generating so much on-screen content without invoking the shader pipeline would have been unthinkable just a few years ago. It is a remarkable change of direction, but those magic extra frames aren’t conjured from thin air. DLSS 3 takes four inputs – two sequential in-game frames, an optical flow field and engine data such as motion vectors and depth buffers – to create and insert synthesised frames between working frames.

In order to capture the required information, Ada’s Optical Flow Accelerator is capable of up to 300 TeraOPS (TOPS) of optical-flow work, and that 2x speed increase over Ampere is viewed as vital in generating accurate frames without artifacts.

The real-world benefit of AI-generated frames is most keenly felt in games that are CPU bound, where DLSS Super Resolution can typically do little to help. Nvidia’s preferred example is Microsoft Flight Simulator, whose vast draw distances inevitably force a CPU bottleneck. Internal data suggests DLSS 3 Frame Generation can boost Flight Sim performance by as much as 2x.

Do note, also, that Frame Generation and Super Resolution can be implemented independently by developers. In an ideal world, gamers will have the choice of turning the former on/off, while being able to adjust the latter via a choice of quality settings.

More demanding AI workloads naturally warrant faster Tensor Cores, and Ada obliges by borrowing the FP8 Transformer Engine from HPC-optimised Hopper. Peak FP16 Tensor teraflops performance is already doubled from 320 on Ampere to 661 on Ada, but with added support for FP8, RTX 4090 can deliver a theoretical 1.3 petaflops of Tensor processing.

Plenty of bombast, yet won’t such processing result in an unwanted hike in latency? Such concerns are genuine; Nvidia has taken the decision to make Reflex a mandatory requirement for DLSS 3 implementation.

Designed to bypass the traditional render queue, Reflex synchronises CPU and GPU workloads for optimal responsiveness and up to a 2x reduction in latency. Ada optimisations and in particular Reflex are key in keeping DLSS 3 latency down to DLSS 2 levels, but as with so much that is DLSS related, success is predicated on the assumption developers will jump through the relevant hoops. In this case, Reflex markers must be added to code, allowing the game engine to feed back the data required to coordinate both CPU and GPU.

Given the often-sketchy state of PC game development, gamers are right to be cautious when the onus is placed in the hands of devs, and there is another caveat in that DLSS tech is becoming increasingly fragmented between generations.

DLSS 3 now represents a superset of three core technologies: Frame Generation (exclusive to RTX 40 Series), Super Resolution (RTX 20/30/40 Series), and Reflex (any GeForce GPU since the 900 Series). Nvidia has no immediate plans to backport Frame Generation to slower Ampere cards.

8th Generation NVENC

Last but not least, Ada Lovelace is wise not to overlook the soaring popularity of game streaming, both during and after the pandemic.

Building upon Ampere’s support for AV1 decoding, Ada adds hardware AV1 encoding, which Nvidia rates as up to 40 per cent more efficient than H.264. This, says Nvidia, allows streamers to bump their stream resolution to 1440p while maintaining the same bitrate.

AV1 support also bodes well for professional apps – DaVinci Resolve is among the first to announce compatibility – and Nvidia extends this potential on high-end RTX 40 Series GPUs by ensuring both launch models include dual 8th Gen NVENC encoders (enabling 8K60 capture and 2x quicker exports) as well as a 5th Gen NVDEC decoder as standard.

Super Size FE

Nvidia add-in board partners hoping for a little breathing room are set for disappointment. RTX 4080 12GB – formerly an AIB-only SKU – has been canned, meaning any custom 40 Series part has the tricky task of competing against Nvidia’s own Founders Edition.

A formidable task as the GeForce manufacturer has doubled down on ensuring the reference design is more ambitious than ever. RTX 4080, like RTX 4090 before it, is an astonishingly thick card occupying a full three slots and measuring 304mm x 137mm x 61mm.

It’s a monster alright, yet after the initial shock has worn off, there are upsides to the gargantuan design. Nvidia takes advantage of a broader waistline by incorporating a new vapour chamber, improved heatpipe configuration, larger fans touting a 20 per cent increase in airflow, and particular emphasis on memory cooling.

GDDR6X ran notoriously hot on last-gen cards, and Nvidia has tackled that issue in two ways. Firstly, the Micron chips are now built on a smaller, more efficient node. Secondly, all memory now resides on one side of the PCB for more effective cooling. How effective? Nvidia claims a 10°C reduction in GDDR6X temperature when gaming.

As expected, power is sourced from a single 16-pin 12VHPWR connector offering compatibility with ATX 3.0 power supplies. The port hasn’t been without controversy – Nvidia is reportedly still investigating instances of melting parts – but as a general rule of thumb, try not to bend cables at extreme angles, and ensure the connector is fully inserted. Note that RTX 4080 ships with a three-way, eight-pin-to-12VHPWR adapter as part of the bundle, and yep, keeping those cables looking tidy is nigh-on impossible.

RTX 4080, like its bigger brother, is pitched as a heavyweight performer – the Founders Edition tips the scales at 2,135g – yet there are many ways to contemplate AD103’s place in the market. Nvidia’s compact PCB looks sparse with just the eight 2GB GDDR6X memory chips surrounding the relatively small GPU, and power phases have halved compared to flagship RTX 4090. This, clearly, could have been a much smaller product, perhaps even suited to small-form-factor setups.

There’s also a long-running debate over GPU pricing. Nvidia will point out that 4nm wafers don’t come cheap, and nobody can ignore the fact that multiple major economies teeter on the brink of recession, yet gamers who have relied on x80 series solutions over the years will have witnessed a dramatic shift in pricing. GeForce GTX 1080, RTX 2080 and RTX 3080 all had variants starting at $699. RTX 4080 coming in at $1,199 will be viewed as galling by those expecting to upgrade for less, but Nvidia evidently feels bullish about its prospects.

That mood may change if AMD’s upcoming Radeon RX 7900 XTX delivers the requisite punch at $999, yet even so, Nvidia’s expected lead in raytracing and DLSS suggests RTX 4080 will go unmoved. Rather, given excess stock in the channel, existing RTX 30 Series parts will remain as sub-$1,000 alternatives for the time being.

Circling back to partners being bullied into a tight spot, the Founders Edition, at $1,199, presents something of a pickle for premium custom coolers. There’s no room for, say, a liquid-cooled RTX 4080, as pricing would inevitably creep toward RTX 4090, which is a vastly superior product.

Performance

Our 5950X Test PCs

Club386 carefully chooses each component in a test bench to best suit the review at hand. When you view our benchmarks, you’re not just getting an opinion, but the results of rigorous testing carried out using hardware we trust.

Club386 test platform components:

- CPU: AMD Ryzen 9 5950X

- Motherboard: Asus ROG X570 Crosshair VIII Formula

- Cooler: Corsair Hydro Series H150i Pro RGB

- Memory: 32GB G.Skill Trident Z Neo DDR4

- Storage: 2TB Corsair MP600 SSD

- PSU: be quiet! Straight Power 11 Platinum 1300W

- Chassis: Fractal Design Define 7 Clear TG

Our trusty test platforms have been working overtime these past few months, and though the PCIe slot is starting to look worse for wear, the AM4 rigs haven’t skipped a beat. RTX 4080 locked and loaded.

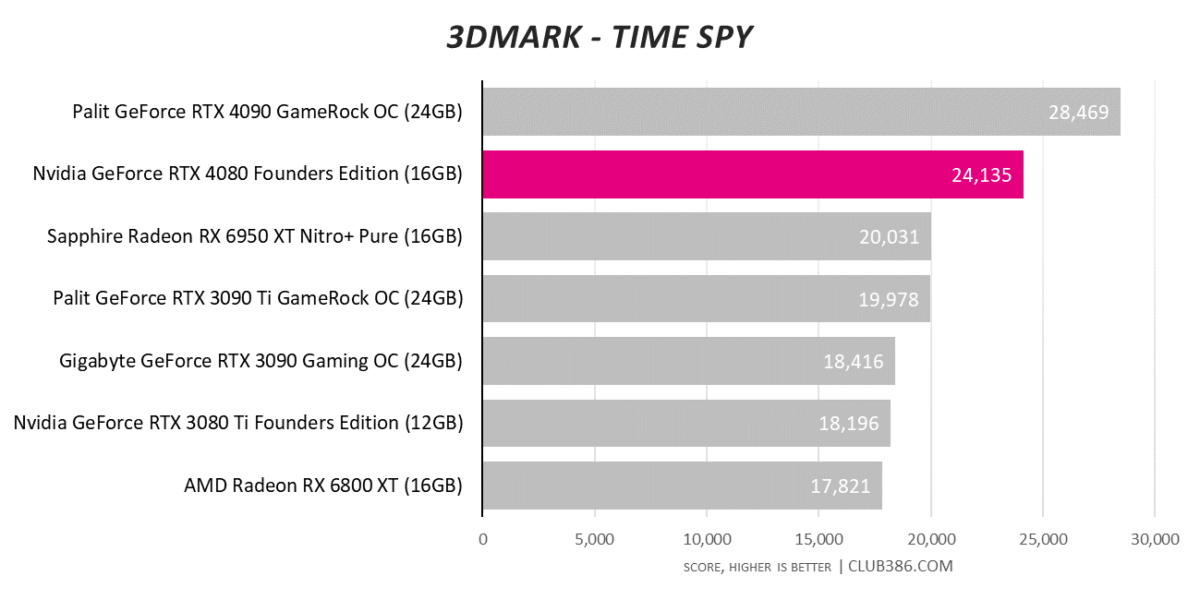

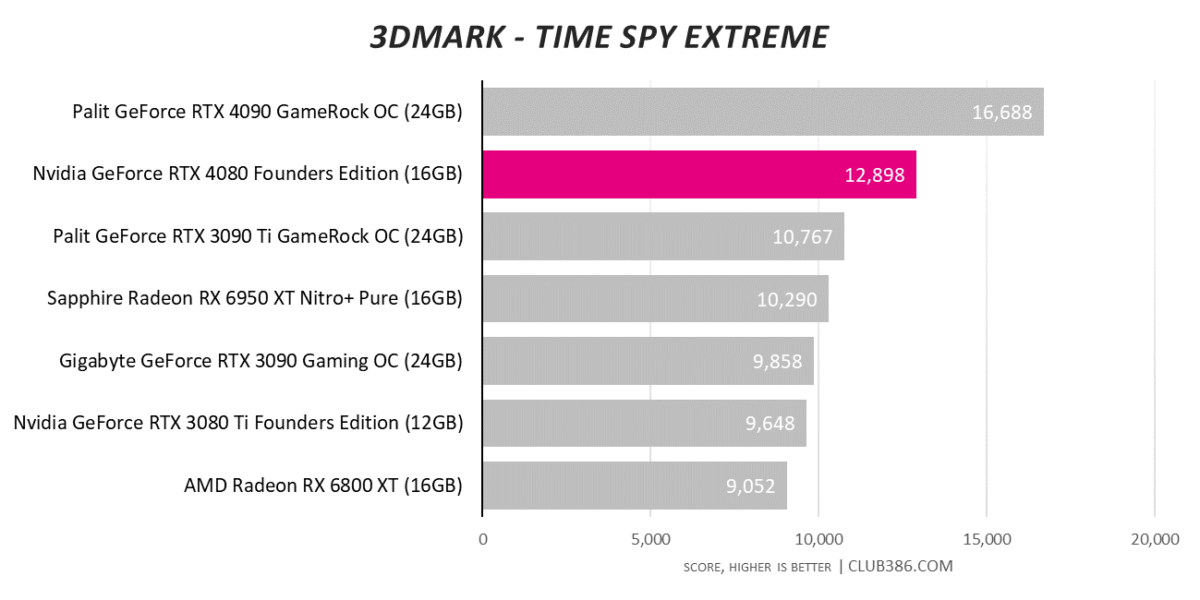

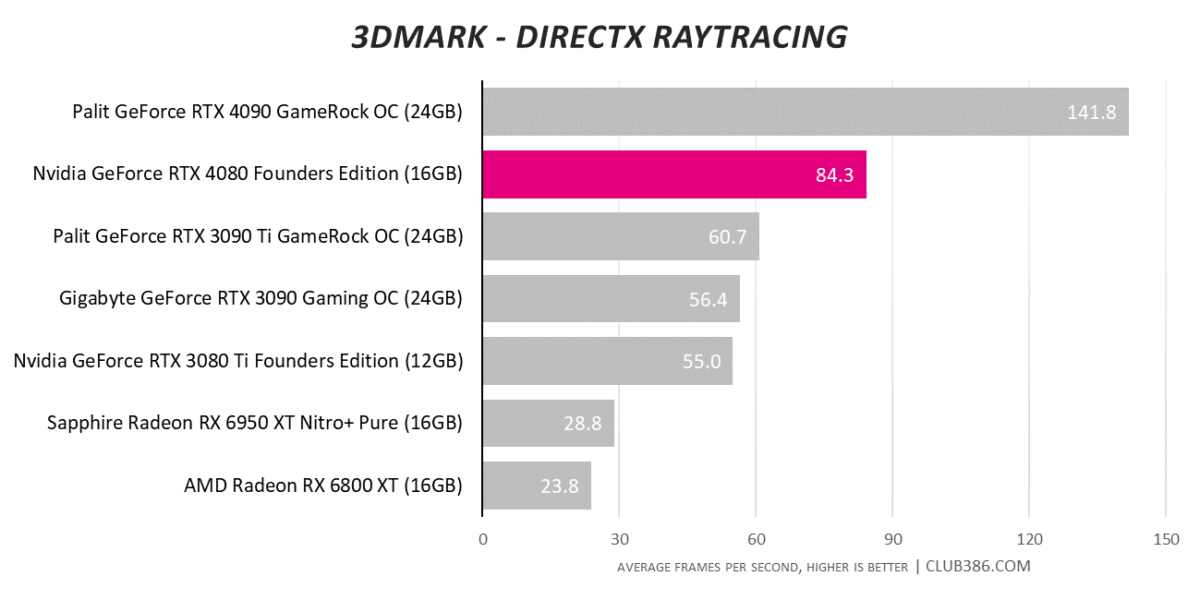

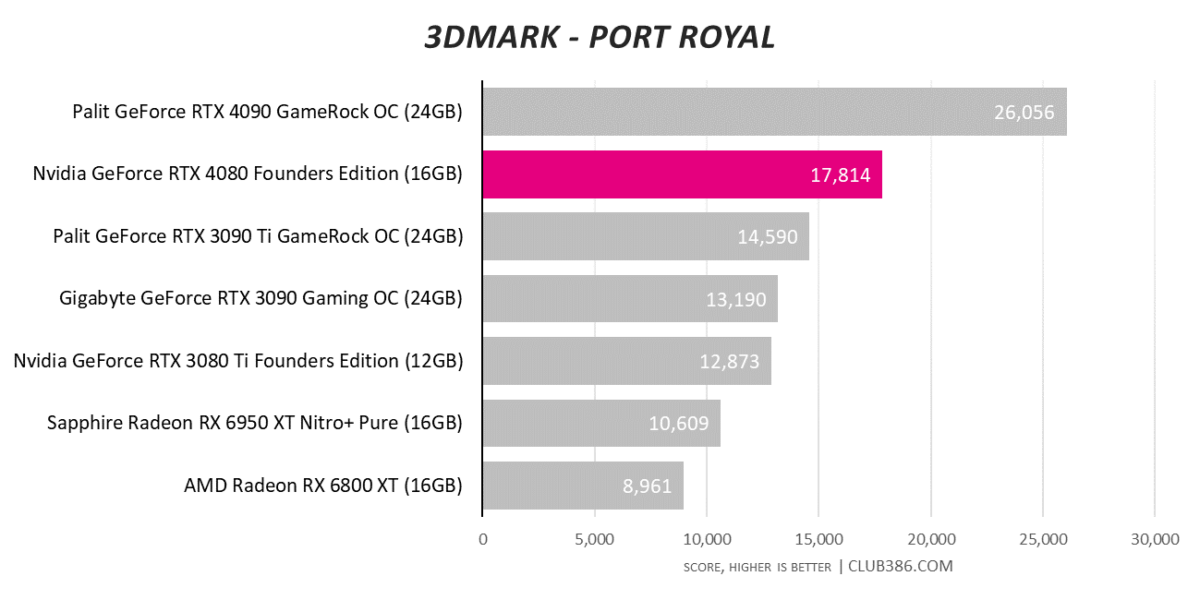

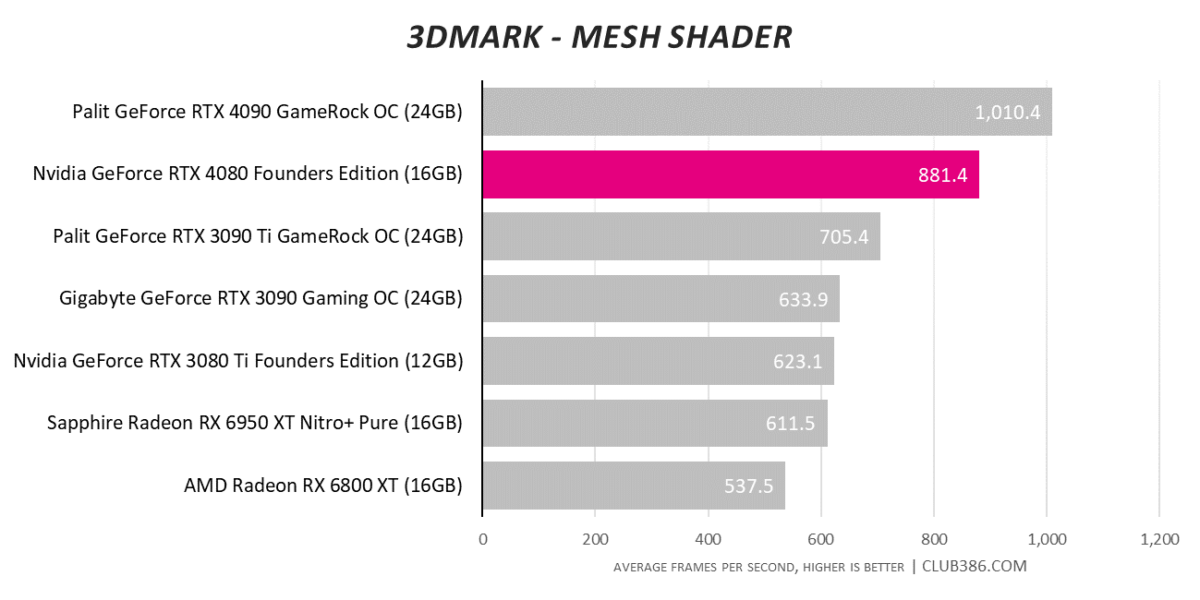

Slotting in as expected, second-rung GeForce is up to 23 per cent slower than RTX 4090 in the synthetic tests and up to 33 per cent quicker than price-comparable RTX 3080 Ti. A solid start.

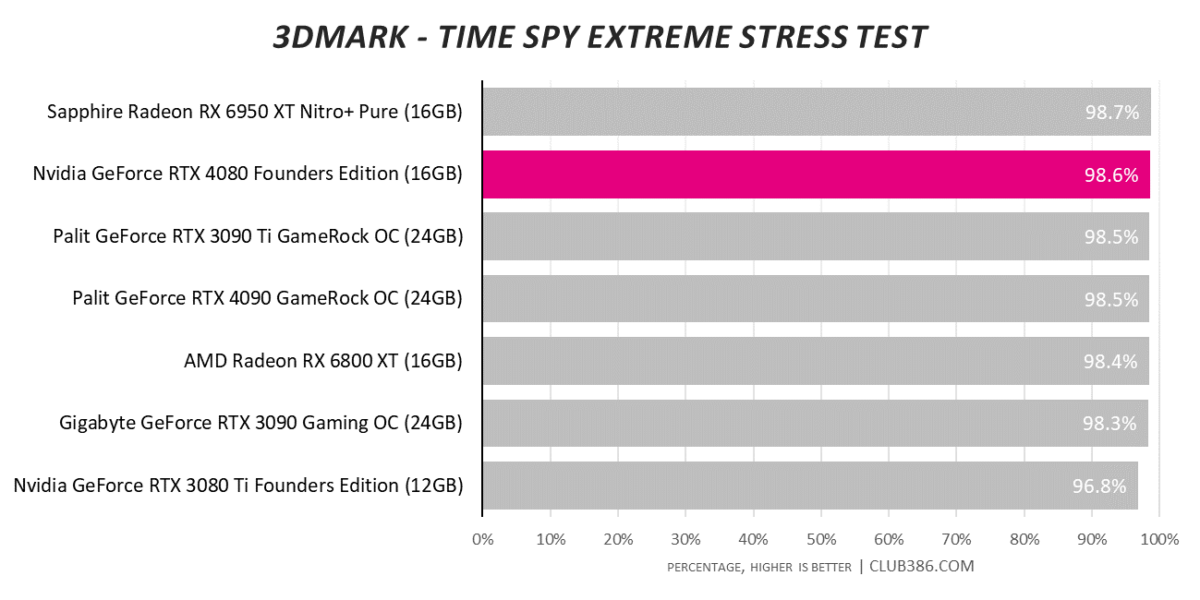

We’ve alluded to the composed nature of the Founders Edition board and that sentiment is reflected in the Stress Test. Framerate consistency is excellent with the card rarely straying from a real-world clock speed of 2.7GHz.

The gap between RTX 4090 and RTX 4080 will inevitably be more pronounced in certain scenarios. A 40 per cent reduction in RT cores is reflected in the 3DMark DirectX Raytracing test. Get used to seeing RTX 4080 in second place.

RTX 4090 is in another league entirely, yet RTX 4080 has no trouble speeding past everything from the previous generation. We can’t wait to see where RDNA 3 fits in.

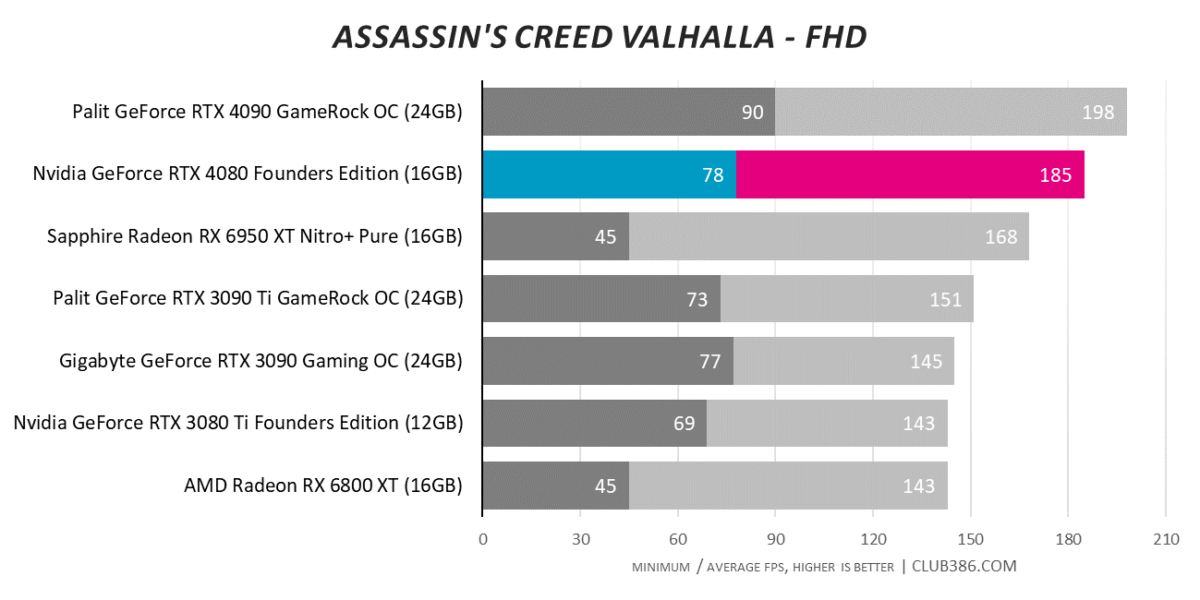

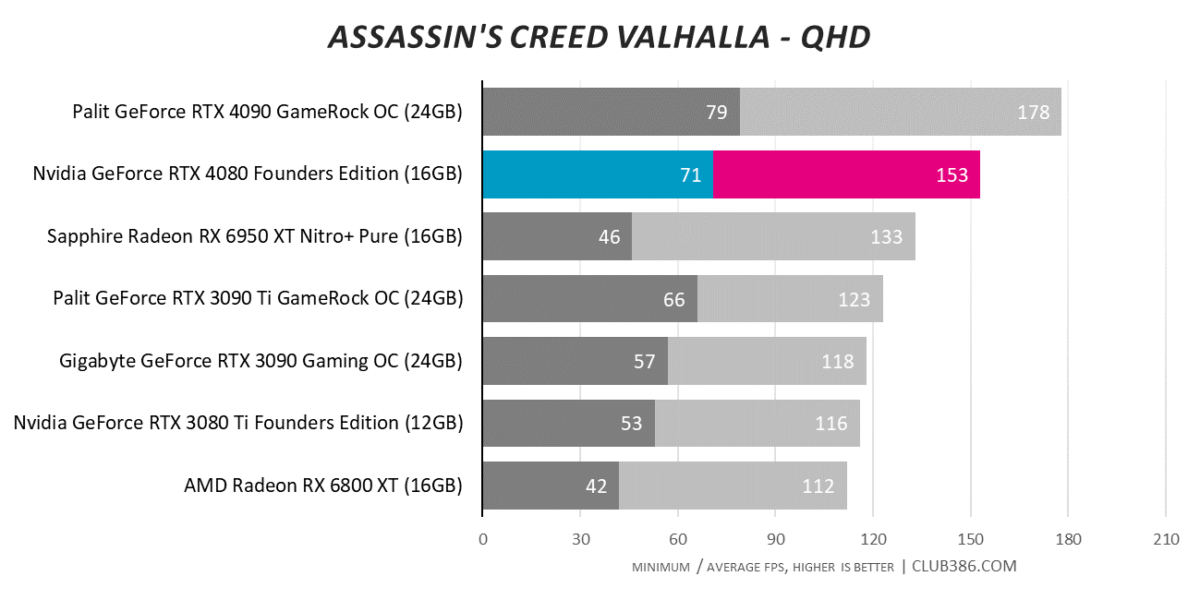

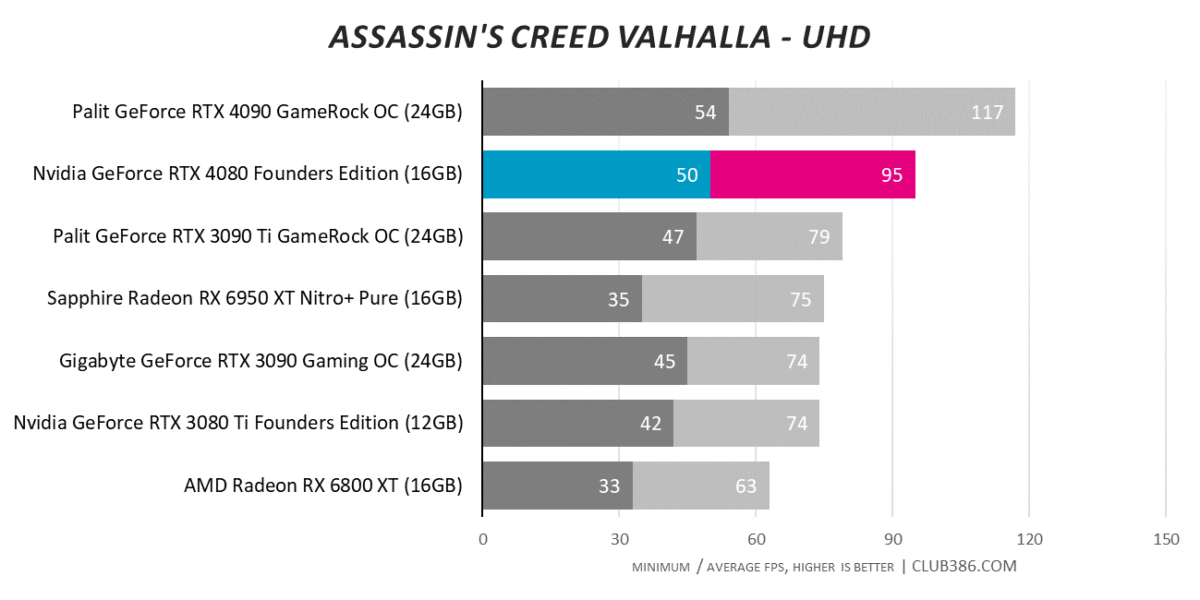

Assassin’s Creed Valhalla

Our first game, Assassin’s Creed Valhalla, shows RTX 3090 Ti and Radeon RX 6950 XT – heavy hitters from the prior generation – are beaten by up to 20 per cent at the highest resolution.

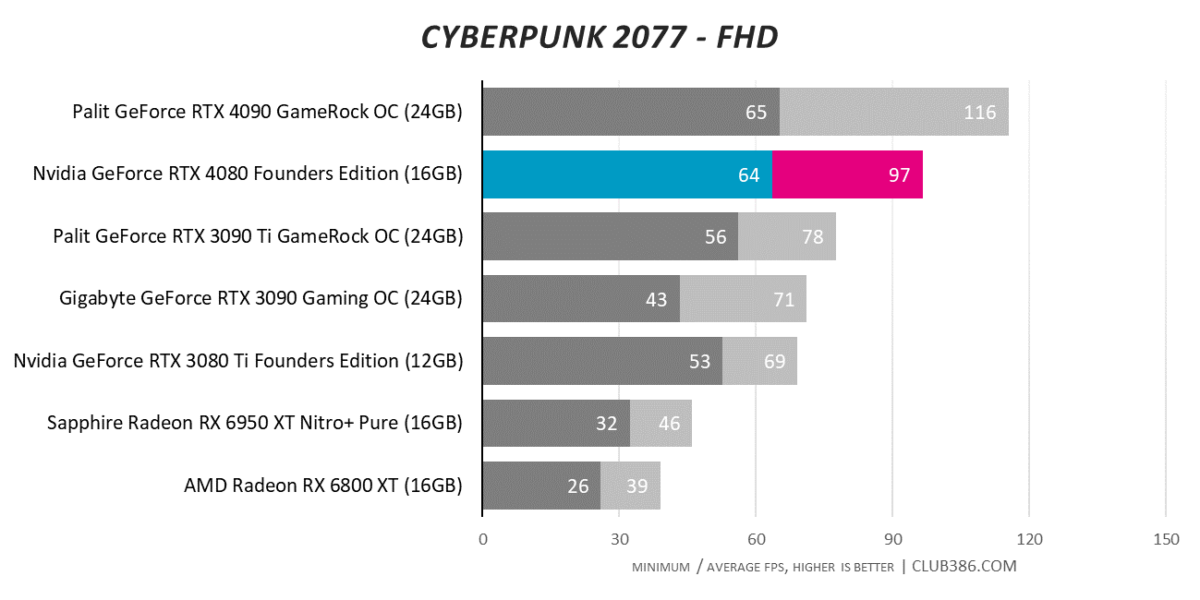

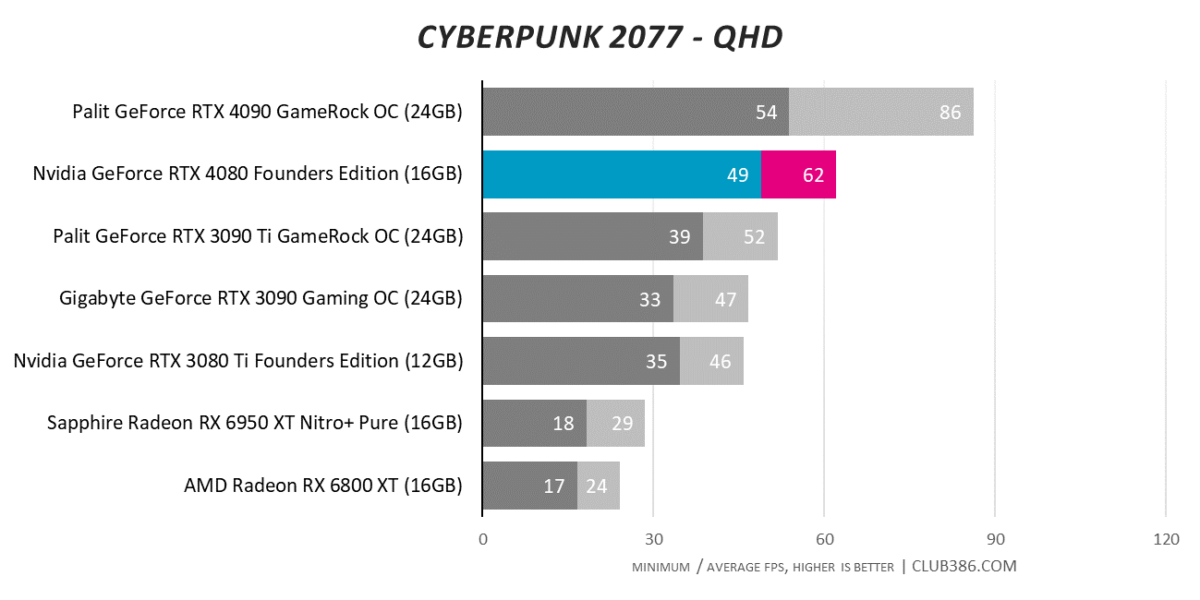

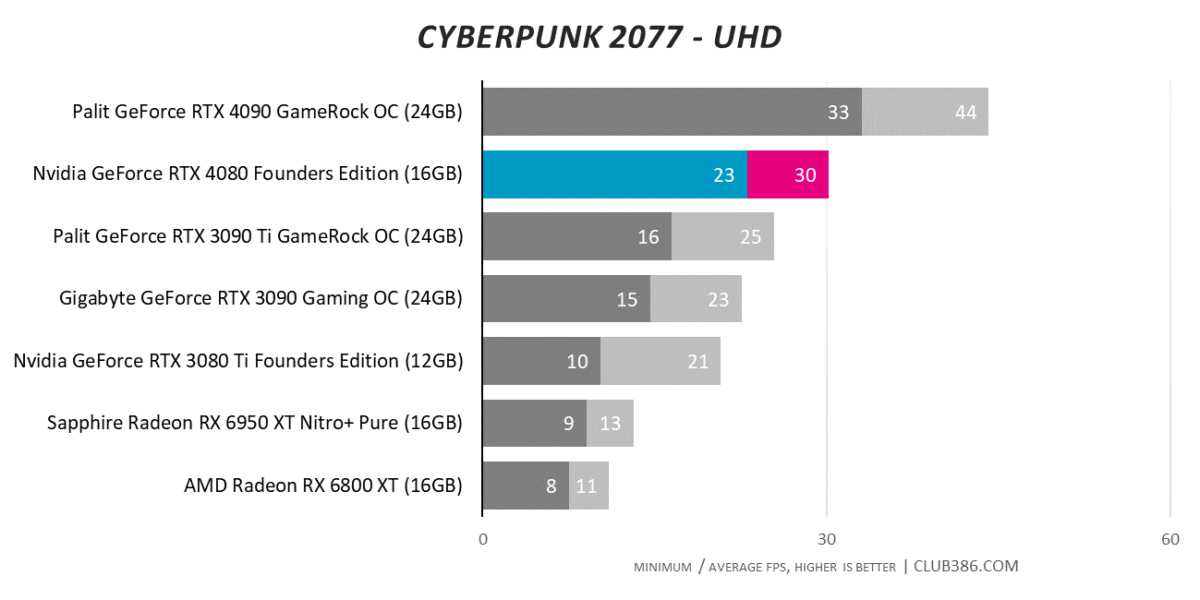

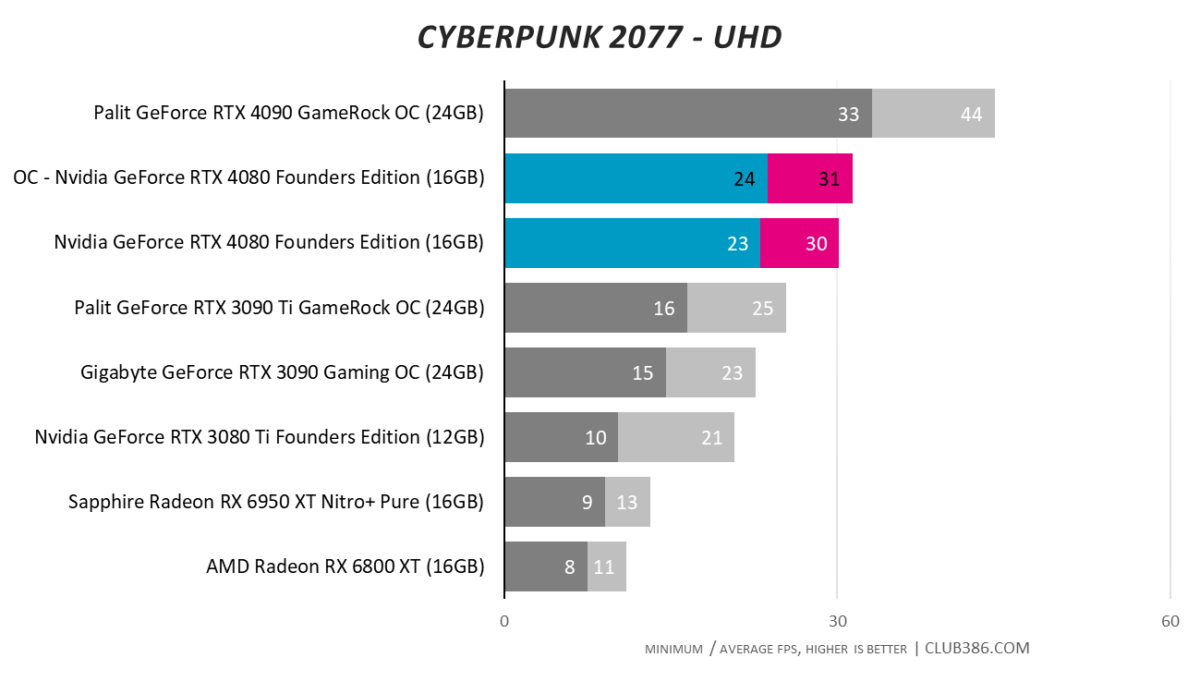

Cyberpunk 2077

The big challenge for rival Radeons is catching up when raytracing is cranked to ultra. RTX 4080 offers more than double the performance of Radeon RX 6950 XT across every resolution.

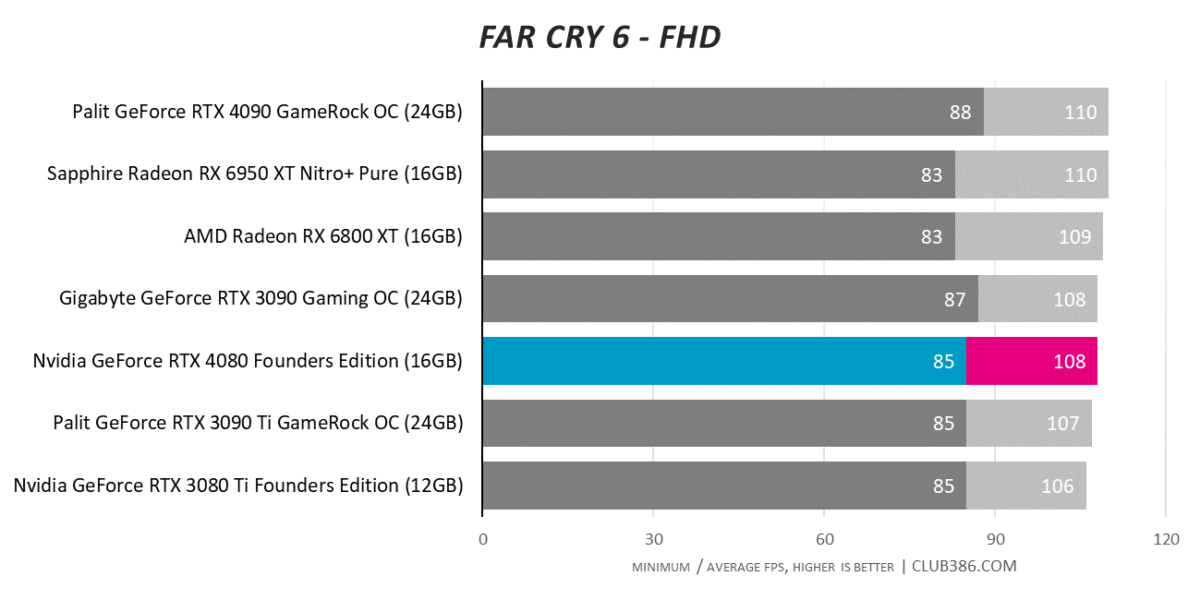

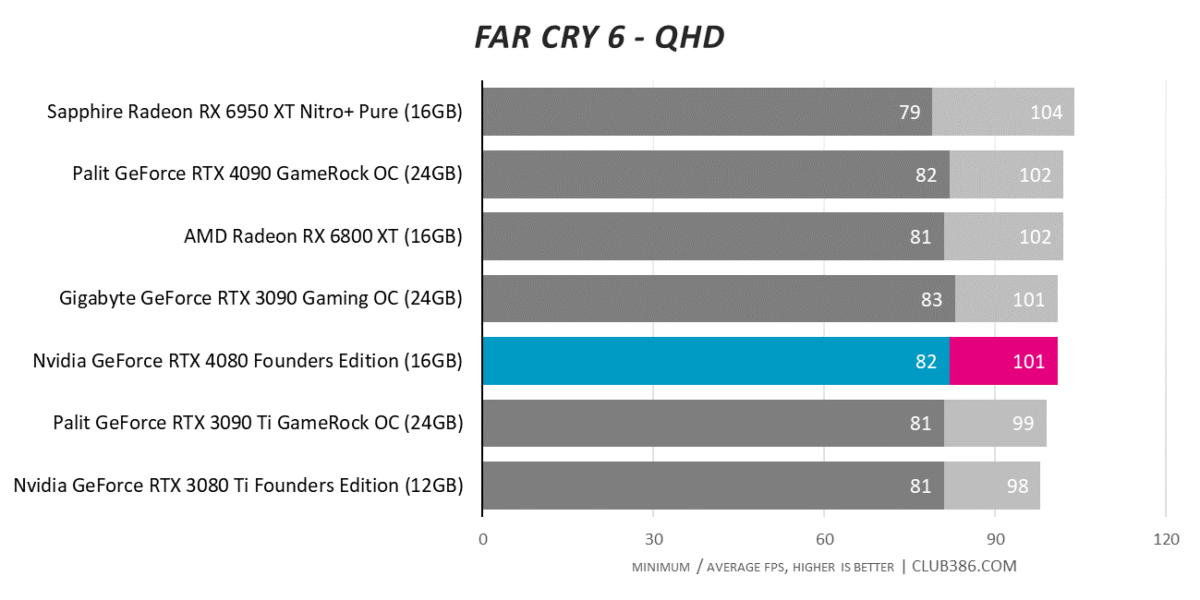

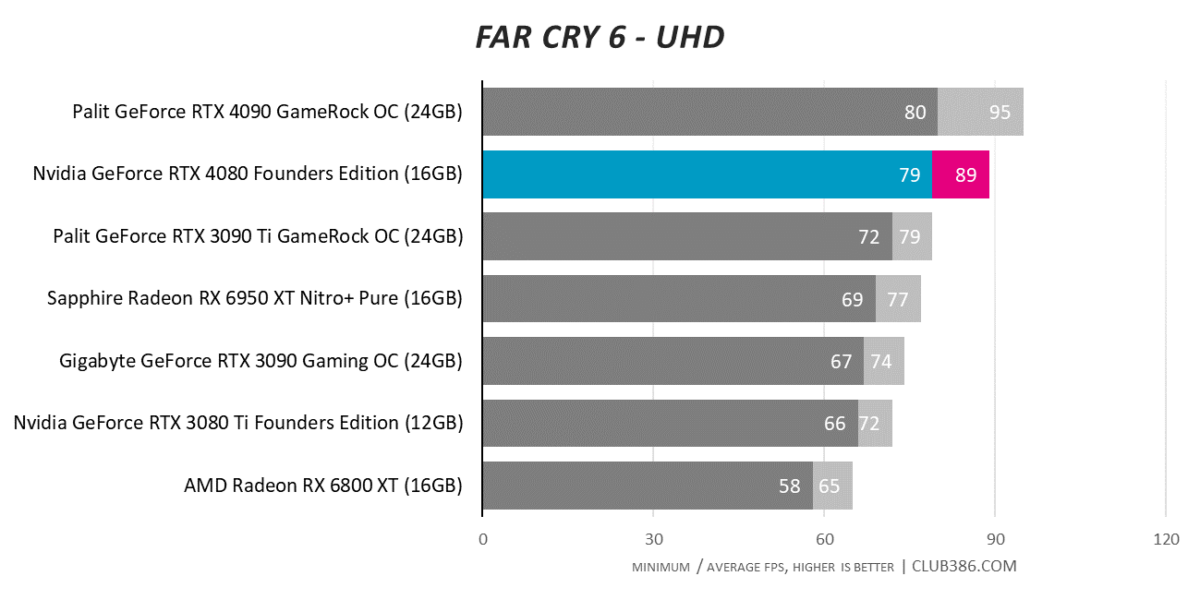

Far Cry 6

You have to play Far Cry 6 at a 4K UHD resolution to separate the very best GPUs. RTX 4080 beats RTX 3080 Ti by 24 per cent, but let’s not forget, you don’t need to spend this sort of money to get 4K60 performance in most titles.

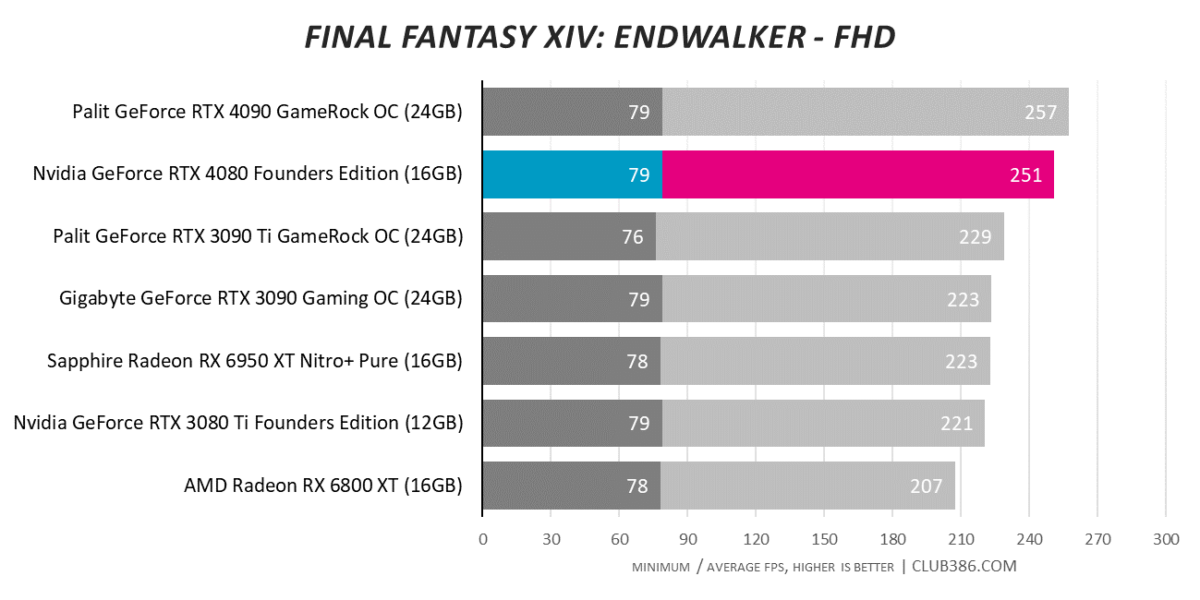

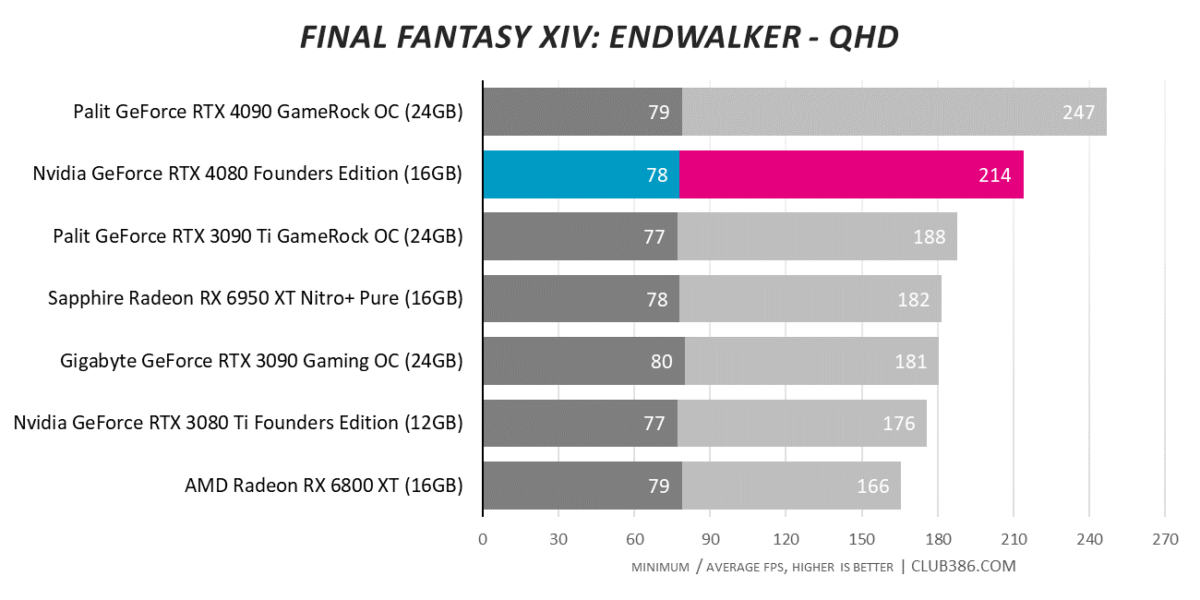

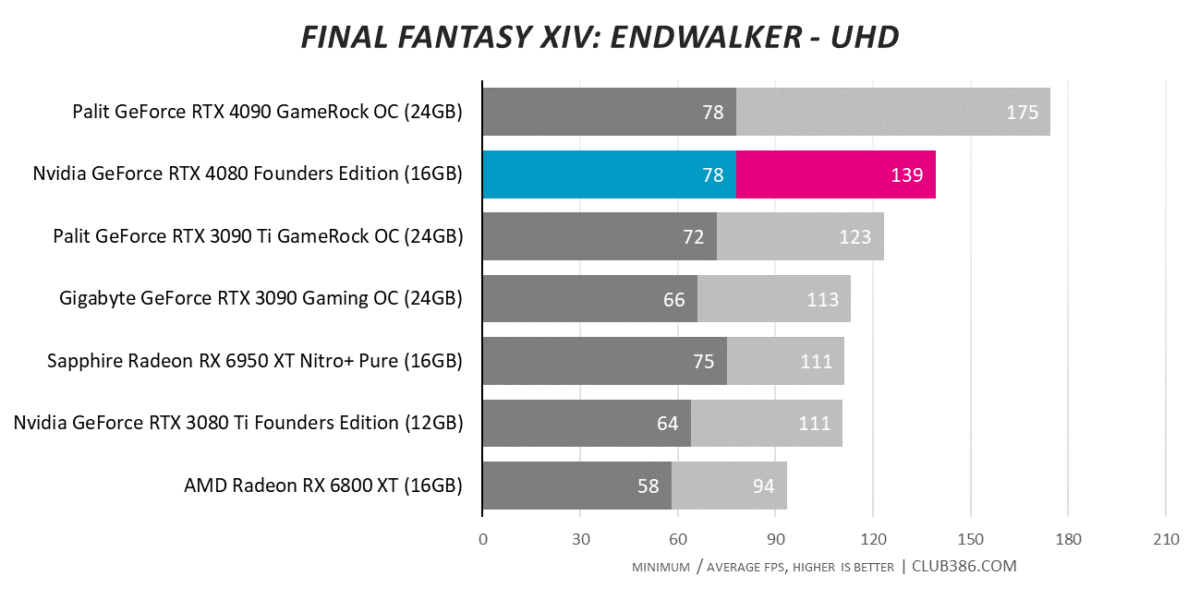

Final Fantasy XIV: Endwalker

We appreciate the free-to-use Final Fantasy benchmark as a gauge for pure rasterisation performance. RTX 4090 continues to stand apart, but is RTX 4080 doing enough to justify a £1,269 fee on these here shores? That’ll be argued ’til the cows come home.

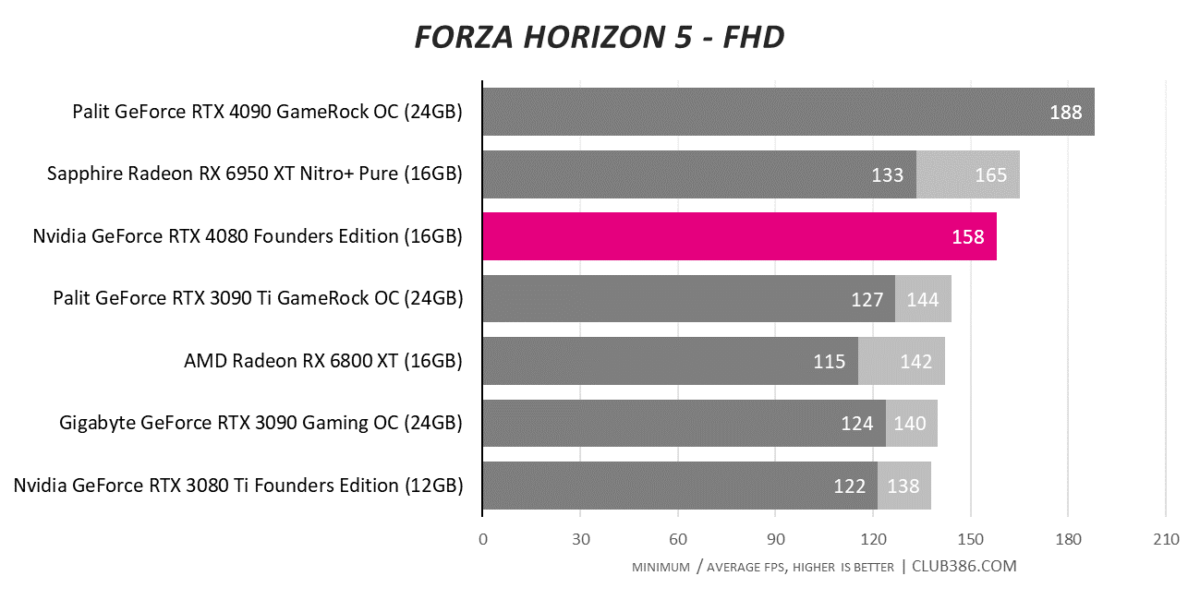

Forza Horizon 5

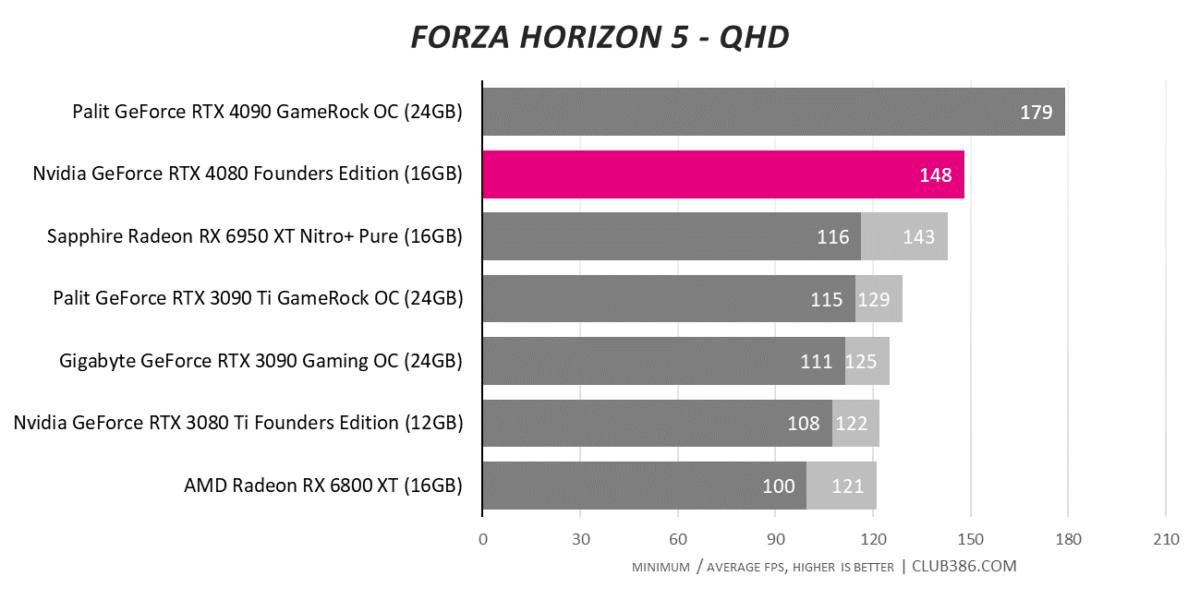

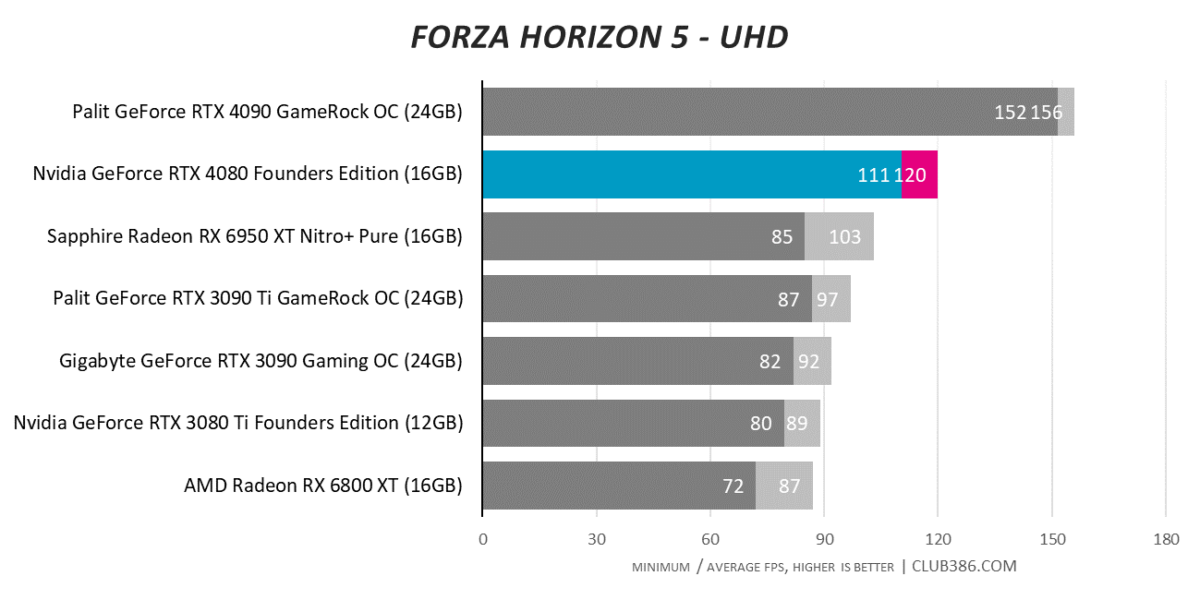

Forza Horizon 5 runs particularly well on Radeon hardware, so much so that Radeon RX 6950 XT and GeForce RTX 4080 are only really separable at the most demanding 4K resolution. Why is no minimum framerate listed at FHD and QHD? Put simply, the minimum GPU framerate is higher than the average; you’ll want a faster CPU to prevent any such bottleneck.

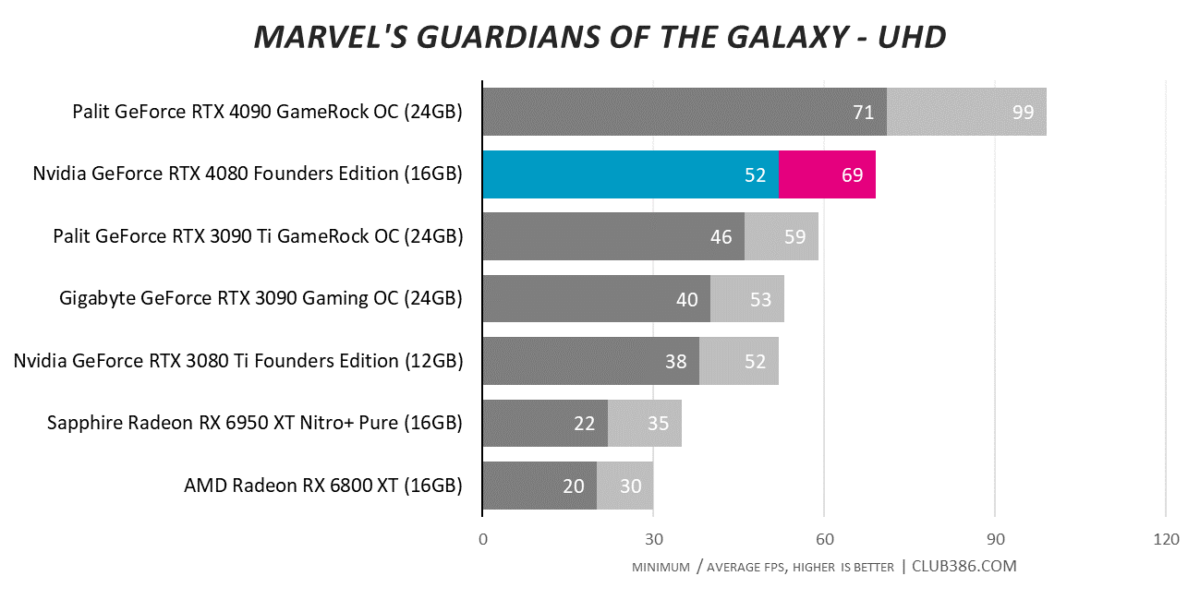

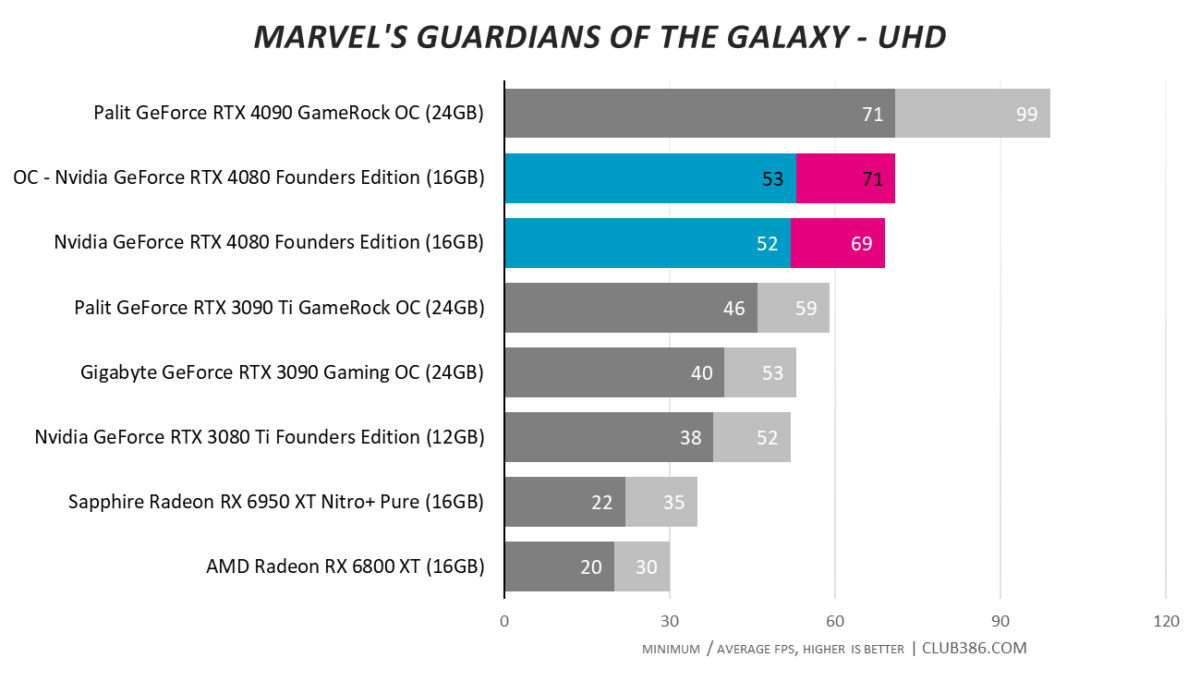

Marvel’s Guardians of the Galaxy

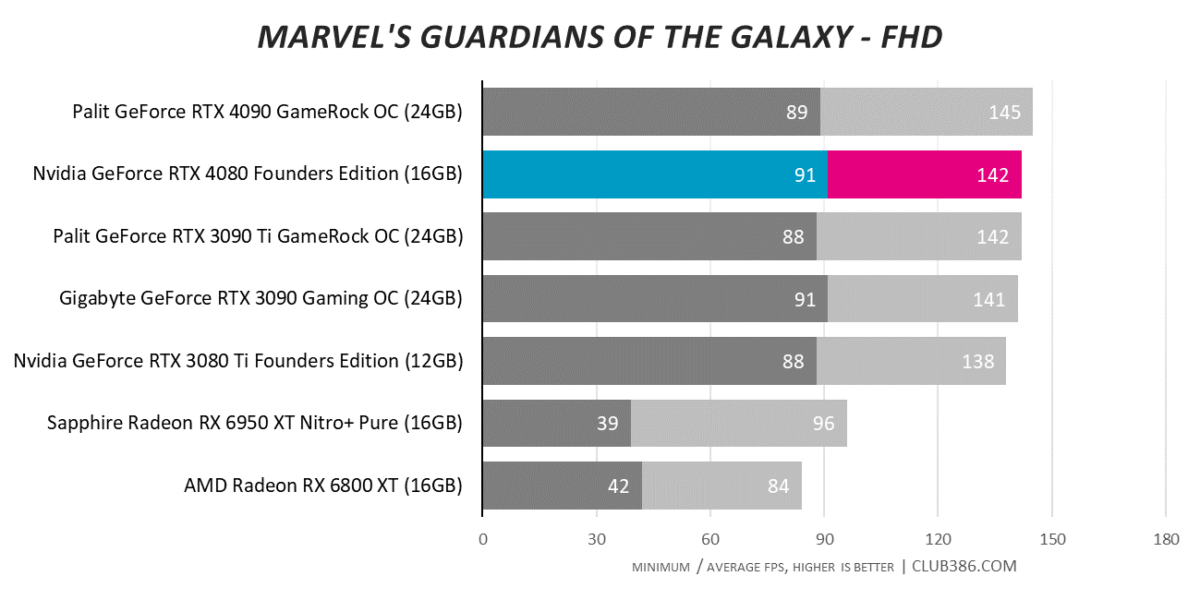

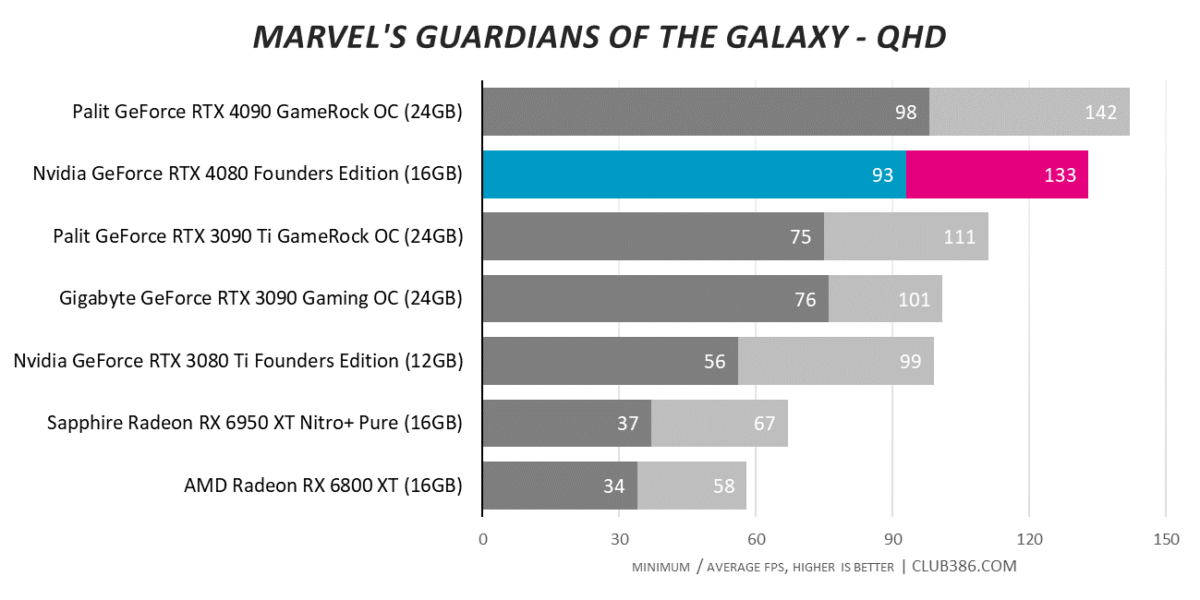

2K120 or 4K60? Either way, RTX 4080 can make it happen in Marvel’s Guardians of the Galaxy while all previous-gen cards fall short. The gap between the top two is begging to be filled by RTX 4080 Ti or, who knows, Radeon RX 7900 XTX.

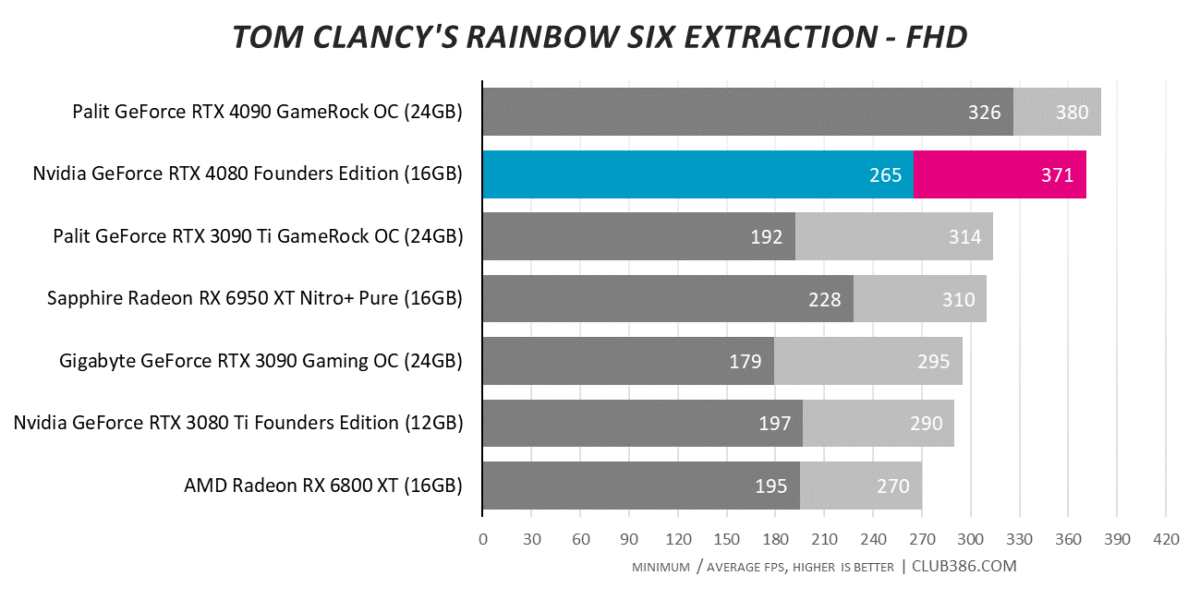

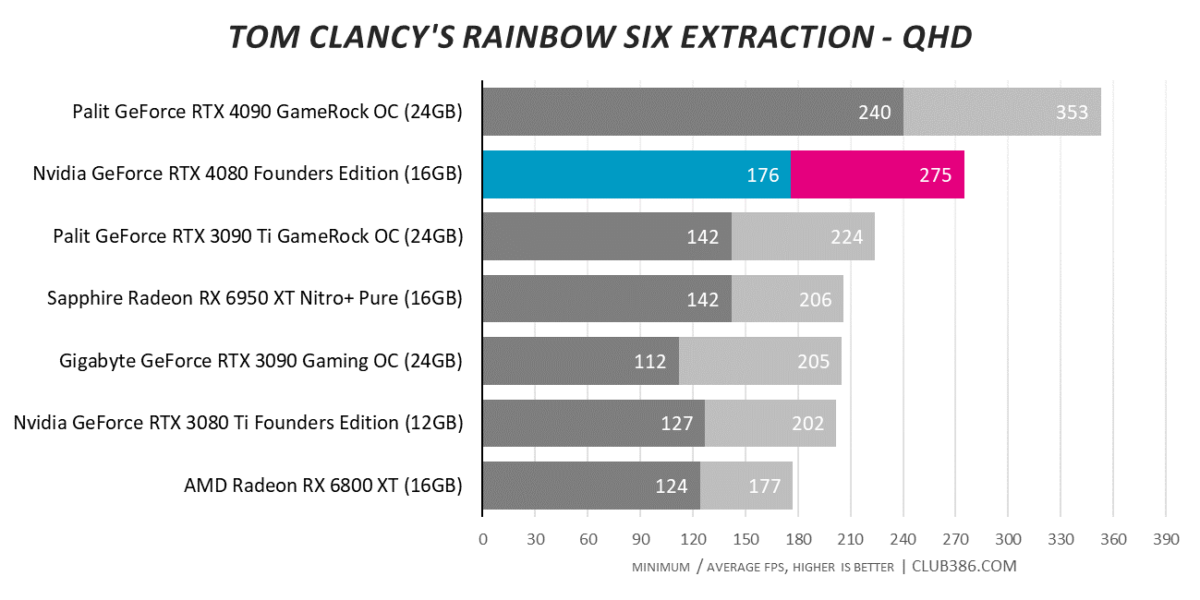

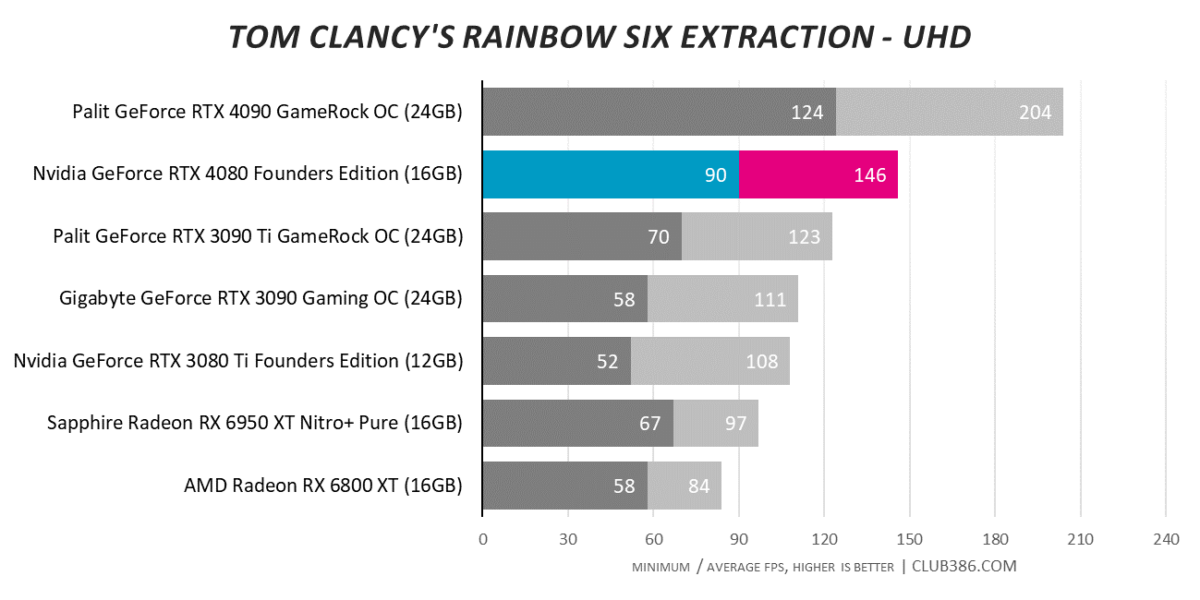

Tom Clancy’s Rainbow Six Extraction

RTX 4080 has the unenviable task of following in RTX 4090’s rather astonishing footsteps. Still, 35 per cent quicker than RTX 3080 Ti is about right.

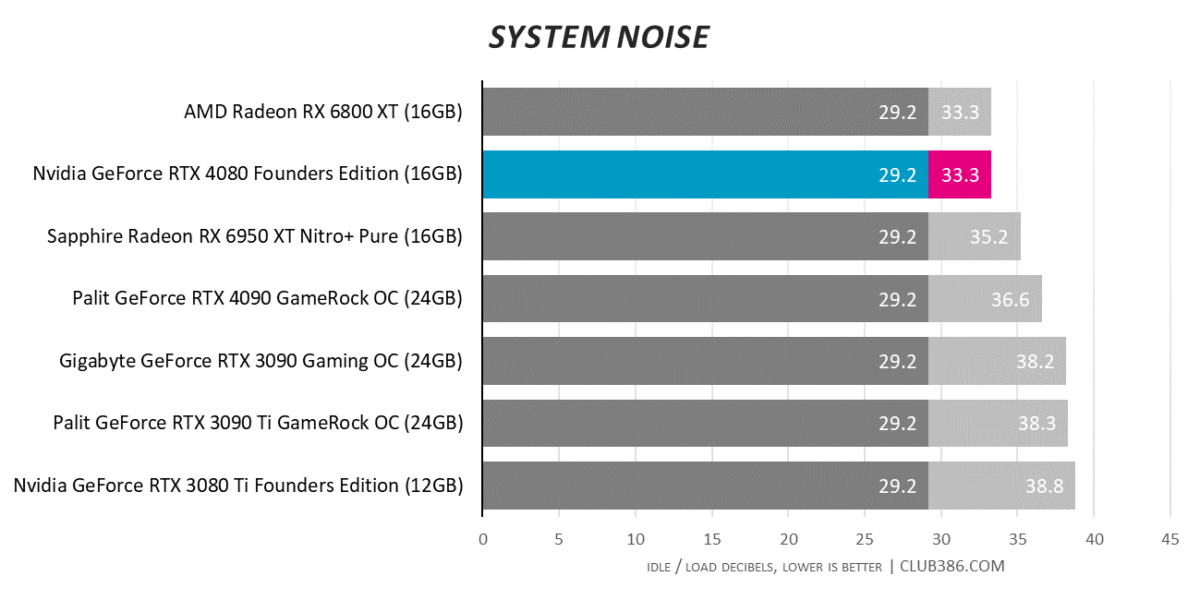

Relative Performance, Efficiency, Temps and Noise

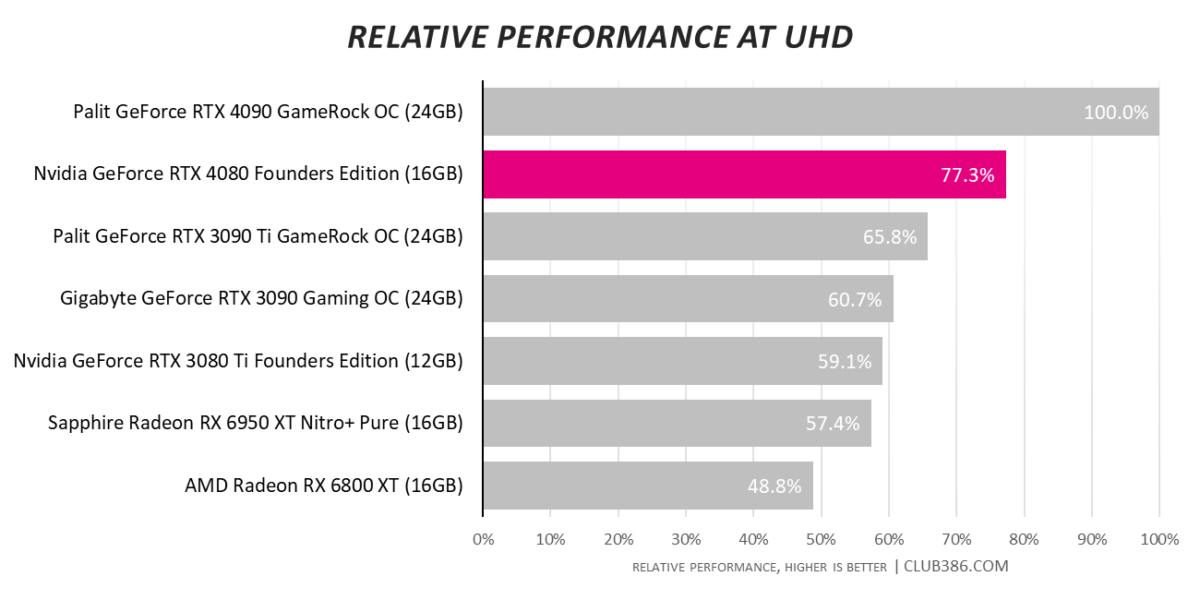

Aggregate in-game framerates at 4K resolution serve up no major surprises. RTX 4080 offers 77 per cent of the performance of RTX 4090. Convenient, given that it costs 75 per cent as much.
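
For those who like to check the sums, here is a short sketch of the value comparison using the launch MSRPs from the spec table and the 77 per cent relative-performance figure. The variable and function names are ours.

```python
LAUNCH_MSRP_USD = {"RTX 4090": 1599, "RTX 4080": 1199}
RELATIVE_4K_PERFORMANCE = {"RTX 4090": 100.0, "RTX 4080": 77.0}  # per cent


def percent_of(value: float, reference: float) -> float:
    """Express value as a percentage of reference."""
    return 100.0 * value / reference


price_pct = percent_of(LAUNCH_MSRP_USD["RTX 4080"], LAUNCH_MSRP_USD["RTX 4090"])
perf_pct = percent_of(RELATIVE_4K_PERFORMANCE["RTX 4080"],
                      RELATIVE_4K_PERFORMANCE["RTX 4090"])

# Above 1.0 means RTX 4080 delivers more 4K performance per dollar than RTX 4090.
perf_per_dollar_ratio = perf_pct / price_pct

print(f"price: {price_pct:.0f}% of RTX 4090")
print(f"performance: {perf_pct:.0f}% of RTX 4090")
print(f"performance per dollar vs RTX 4090: {perf_per_dollar_ratio:.2f}x")
```

At list price the two cards land within a few per cent of one another on performance per dollar, which is about as linear as GPU pricing gets.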

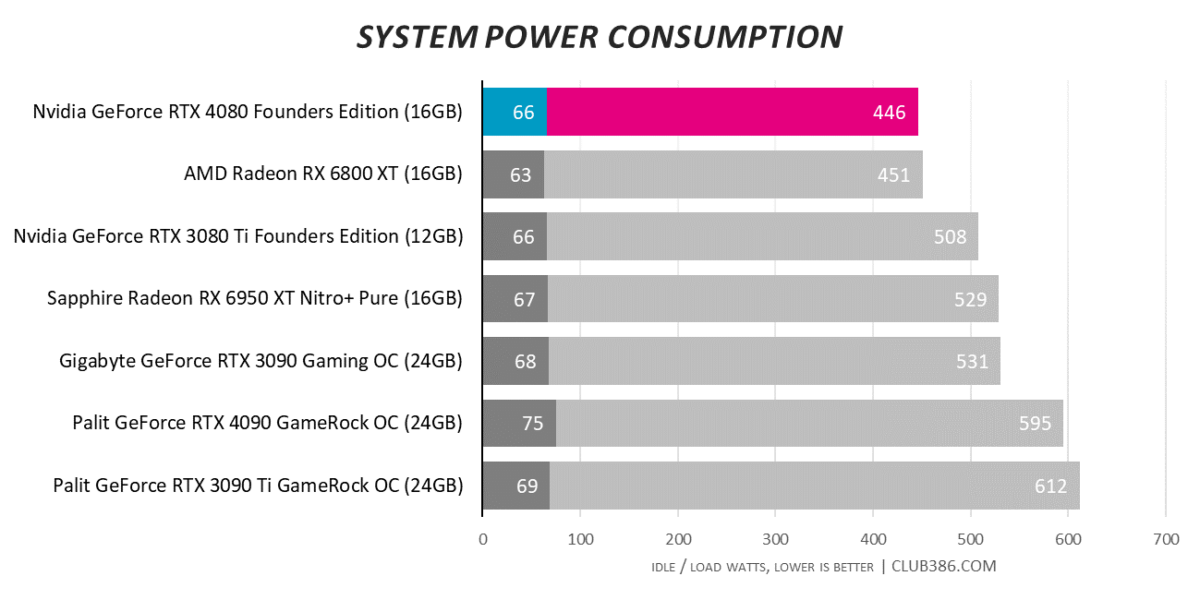

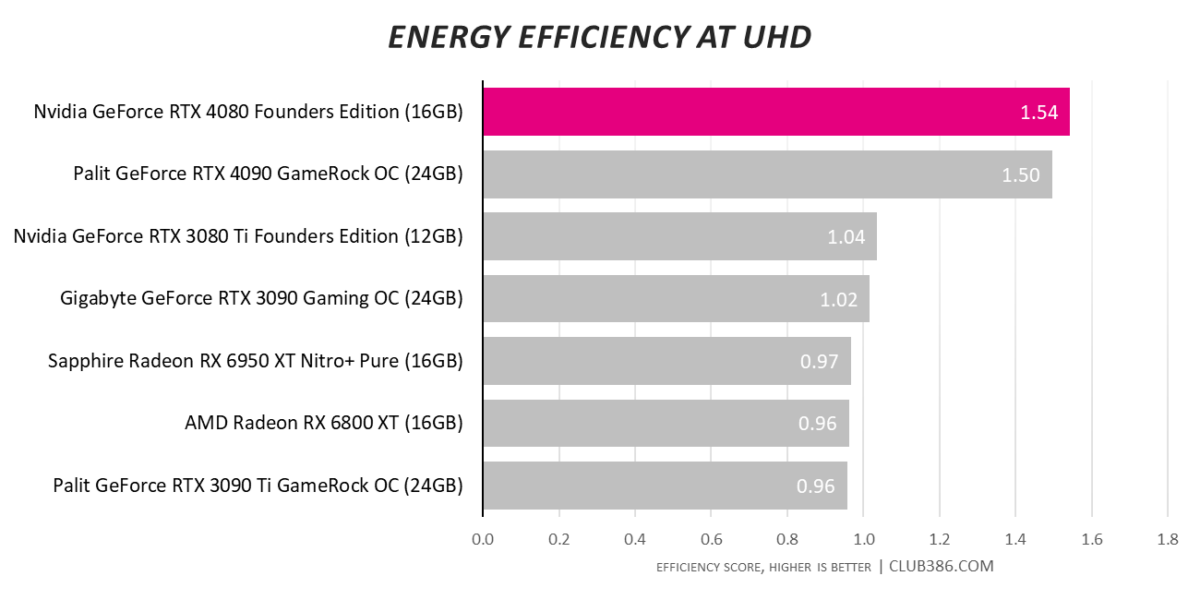

Things begin to get a whole lot more interesting when analysing other areas of the Ada Lovelace architecture. Efficiency, clearly, is a strong suit. RTX 4080 reduces system-wide power consumption to under 450 watts; a 27 per cent reduction over the RTX 3090 Ti it routinely beats in the performance stakes.

Another way to look at efficiency is to divide the average 4K UHD framerate across all tested titles by peak system-wide power consumption. 450W RTX 4090 previously topped the chart by virtue of sky-high performance; RTX 4080 claims the throne through a balance of excellent framerates and best-in-class power draw.
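
The metric itself is simple to compute. In the minimal sketch below, the function name is ours and the sample inputs are round placeholder values chosen purely for illustration; they are not measurements from our test bench.

```python
def efficiency_fps_per_watt(avg_4k_fps: float, peak_system_watts: float) -> float:
    """Average 4K UHD framerate divided by peak system-wide power draw."""
    if peak_system_watts <= 0:
        raise ValueError("peak_system_watts must be positive")
    return avg_4k_fps / peak_system_watts


# Placeholder inputs for illustration only: NOT measured results.
placeholder_cards = {
    "Card A": (100.0, 400.0),  # (average 4K fps, peak system watts)
    "Card B": (120.0, 600.0),
}

ranking = sorted(
    placeholder_cards,
    key=lambda name: efficiency_fps_per_watt(*placeholder_cards[name]),
    reverse=True,
)
print(ranking)
```

Note how the faster placeholder card ranks lower once power is factored in, which is the same dynamic that lets a lower-power GPU overtake a quicker flagship on this chart.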

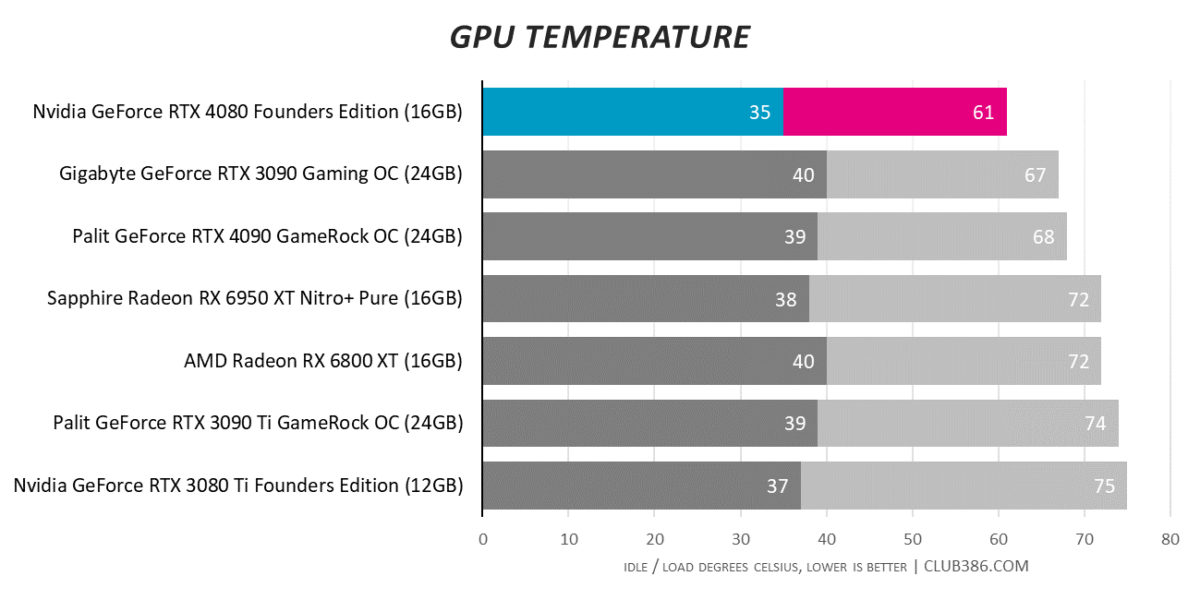

An efficient GPU strapped to a hulking triple-slot cooler can mean only one thing. Yep, you guessed it, this thing barely gets warm.

And what do you know, low temps mean minimal noise. Nvidia’s dual fans don’t bother kicking in until core temp reaches 60°C, and they do so smoothly and quietly. Save for the backlit GeForce RTX logo, it is not a stretch to state that with regular ambient noise you would never know the card was turned on.

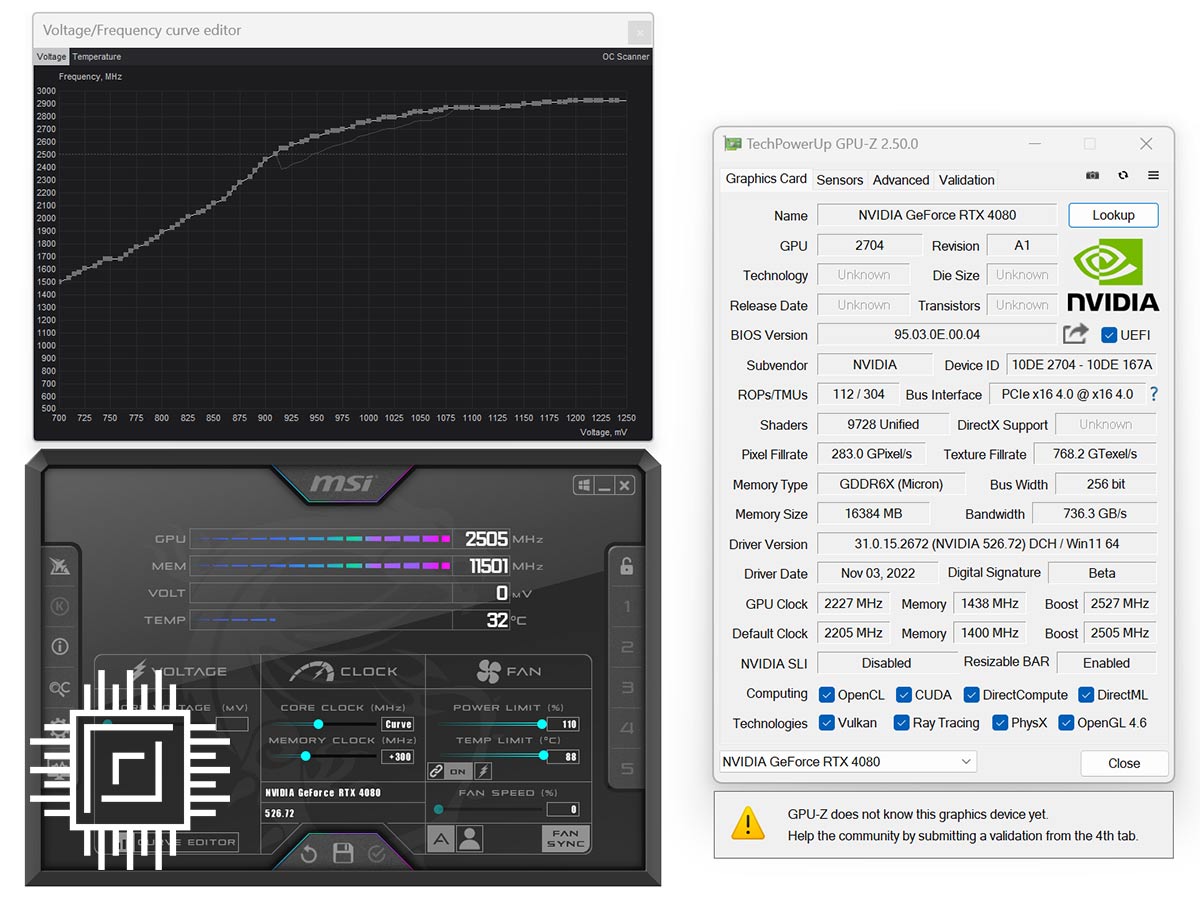

Overclocking

Further tinkering on such premium GPUs is not advisable, yet if you can’t help yourself, Afterburner’s automated OC scanner is a good place to start. Our sample exhibited little in the way of additional headroom. Memory climbed to 23Gbps while the core averaged an in-game 2.85GHz.

Hardly worth the effort for imperceptible gains, and haven’t you heard, there are newer, more efficient ways to increase performance.

DLSS 3

Having spent the past few decades evaluating GPUs in familiar fashion, it is eye-opening to witness a sea change in how graphics performance is both delivered and appraised.

RTX 40 Series may boast thunderous rasterisation and lightning RT Cores, yet DLSS 3 is the storm that’s brewing. Rejogging the memory banks after all those benchmarks, remember developers now have the option to enable individual controls for Super Resolution and/or Frame Generation. The former, as you’re no doubt aware, upscales from a lower render resolution determined by the chosen setting; at a 4K UHD output, Ultra Performance works its magic on a 1280×720 render, Performance on 1920×1080, Balanced works at 2227×1253, and Quality upscales from 2560×1440.
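
Those render resolutions follow from fixed per-axis scale factors applied to the output resolution. The sketch below assumes a 3840×2160 output and scale factors inferred from the figures quoted above (two-thirds, 0.58, one-half and one-third); the dictionary and function names are ours rather than anything from Nvidia’s SDK.

```python
from fractions import Fraction

# Per-axis scale factors, inferred from the resolutions quoted in the text.
DLSS_SCALE = {
    "Quality": Fraction(2, 3),
    "Balanced": Fraction(58, 100),
    "Performance": Fraction(1, 2),
    "Ultra Performance": Fraction(1, 3),
}


def render_resolution(out_width: int, out_height: int, mode: str) -> tuple:
    """Internal render resolution for a given output size and quality mode."""
    scale = DLSS_SCALE[mode]
    return round(out_width * scale), round(out_height * scale)


for mode in DLSS_SCALE:
    width, height = render_resolution(3840, 2160, mode)
    print(f"{mode}: {width}x{height}")
```

Feeding in a different output size, say 2560×1440, shows how quickly the internal render shrinks at lower display resolutions.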

On top of that, Frame Generation inserts a synthesised frame between two rendered, resulting in multiple configuration options. Want the absolute maximum framerate? Switch Super Resolution to Ultra Performance and Frame Generation On, leading to a 720p upscale from which whole frames are also synthesised.

Want to avoid any upscaling but willing to live with frames generated from full-resolution renders? Then turn Super Resolution off and Frame Generation On. Note that enabling the latter automatically invokes Reflex; the latency-reducing tech is mandatory, once again reaffirming the fact that additional processing risks performance in other areas.

Reviewers have been granted access to pre-release versions of select titles incorporating DLSS 3 tech. Initial impressions are that developers are still getting to grips with implementation. Certain games require settings to be enabled in a particular sequence for DLSS 3 to properly enable, while others simply crash when alt-tabbing back to desktop. There are other limitations, too. DLSS 3 is not currently compatible with V-Sync (FreeSync and G-Sync are fine) and Frame Generation only works with DX12.

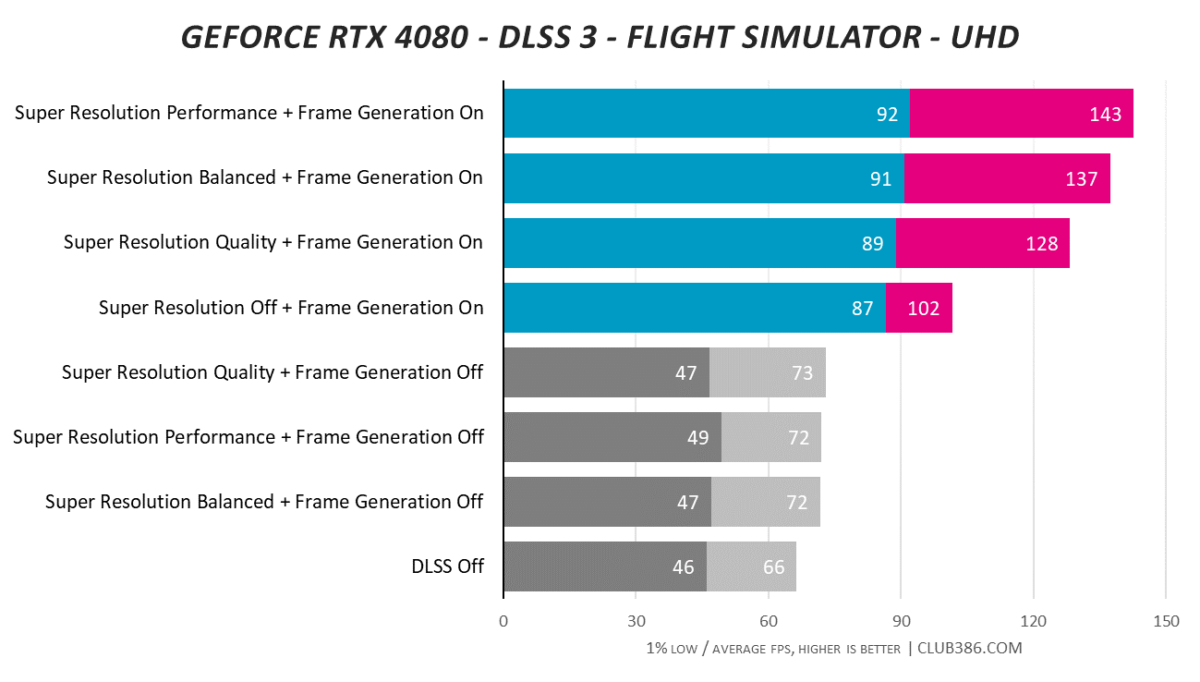

Point is, while Nvidia reckons uptake is strong – some 35 DLSS 3 games have already been announced – these are early days, and some implementations will work better than others. Microsoft Flight Simulator is one of the first to officially support DLSS 3 as part of Sim Update XI (SU11, pictured above), and the results are dramatic to say the least.

Performance is benchmarked at a 4K UHD resolution with Ultra rendering quality. With DLSS disabled entirely, RTX 4080 is able to return an average of 66 frames per second in the Sydney Famous Landing Challenge. The game’s tendency to be CPU bound is reflected by small framerate boosts with Super Resolution (DLSS 2) set to either quality, balanced or performance. There’s little extra mileage to be had.

Frame Generation (DLSS 3) has a far more profound impact. Turning it on boosts performance by a whopping 55 per cent, climbing to 94 per cent when Super Resolution is added to the mix at maximum quality.
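
Translating those percentages back into framerates is a one-liner. This sketch starts from the 66 frames per second baseline quoted above; the function name is ours.

```python
BASELINE_FPS = 66  # 4K UHD, Ultra quality, DLSS disabled


def boosted_fps(baseline_fps: float, boost_percent: float) -> float:
    """Framerate after applying a percentage uplift to the baseline."""
    return baseline_fps * (1 + boost_percent / 100)


frame_generation_only = boosted_fps(BASELINE_FPS, 55)
frame_generation_plus_sr = boosted_fps(BASELINE_FPS, 94)

print(f"Frame Generation only: {frame_generation_only:.0f} fps")
print(f"Frame Generation + Super Resolution: {frame_generation_plus_sr:.0f} fps")
```

In other words, a title hovering in the mid-60s ends up comfortably into triple figures with Frame Generation in play.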

Flight Simulator is an excellent example of where Frame Generation holds merit. This is a tech unlikely to be widely used by competitive gamers who rely on maximum accuracy, but in the right scenario, the framerate gains are simply too large to ignore. With the right monitor, the difference between 4K60 and 4K120 can be profound, and when done well there’s little detriment to image quality.

That isn’t to say it’s perfect – we did notice occasional flickering of elements in the cockpit – but you really have to look for artifacts to know they’re there. Implementation is impressive, even at this early stage, and Nvidia does well to keep latency in check through Reflex. With DLSS disabled entirely, in-game Flight Simulator latency is recorded as 54.2ms. This rises to 64.5ms with Frame Generation enabled, and then drops back down to 54.9ms with Frame Generation and Super Resolution at maximum quality.

It is too soon to deem DLSS 3 a must-have feature, yet make no mistake, it is an important feather in the 40 Series’ cap and one that rivals will soon attempt to replicate. Next-generation AMD Radeon will employ Fluid Motion Frames in an effort to double 4K framerate, but when exactly the tech will be available, and how well it performs, remains unknown.

Conclusion

Following up on the blockbuster performance of GeForce RTX 4090, Nvidia extends its assault on the high-end with GeForce RTX 4080. Starting at £1,269, the new addition cements RTX 40 Series’ positioning as the preserve of deep-pocketed enthusiasts, and even when a third GPU reappears, don’t expect much change from a thousand pounds for an alleged RTX 4070 Ti.

Premium products need to bring performance and features in spades, and the Ada Lovelace architecture delivers through a combination of lofty speed, unmatched raytracing capabilities and forward-looking DLSS. Continuing in that same vein, RTX 4080 touts in-game performance at roughly 77 per cent the level of head-honcho, RTX 4090.

QHD120 or 4K60 are well within the remit of a GPU that bests everything from the previous generation, all while sipping less power, and by employing the same hulking cooler across models, Nvidia ensures both temperature and noise are kept down to an absolute minimum. RTX 4080 lays down a marker in more ways than one. Over to you, Radeon RX 7900 XTX.